Everything posted by joigus

-

What are you listening to right now?

A Sevillana. And she's singing with Andalusian pronunciation. Pepe Marchena: "La Rosa del Jardinero" (The Gardener's Rose). This is cante jondo (deep singing).

-

How can information (Shannon) entropy decrease ?

I haven't heard about it. I've just read a short review and it sounds interesting. Thank you. I would agree that entropy doesn't necessarily represent ignorance. Sometimes it's a measure of information contained in a system. An interesting distinction --that I'm not sure corresponds to Professor Ben-Naim's criterion-- is between fine-grained entropy --which is to do with overall information content-- and coarse-grained entropy --which is to do with available or controlled information. OTOH, entropy is the concept which seems to be responsible for more people telling the rest of the world that nobody has understood it better than they have. A distinguished example is Von Neumann*, and a gutter-level example is the unpalatable presence that we've had on this thread before you came to grace it with your contribution, Genady. *https://mathoverflow.net/questions/403036/john-von-neumanns-remark-on-entropy

-

How can information (Shannon) entropy decrease ?

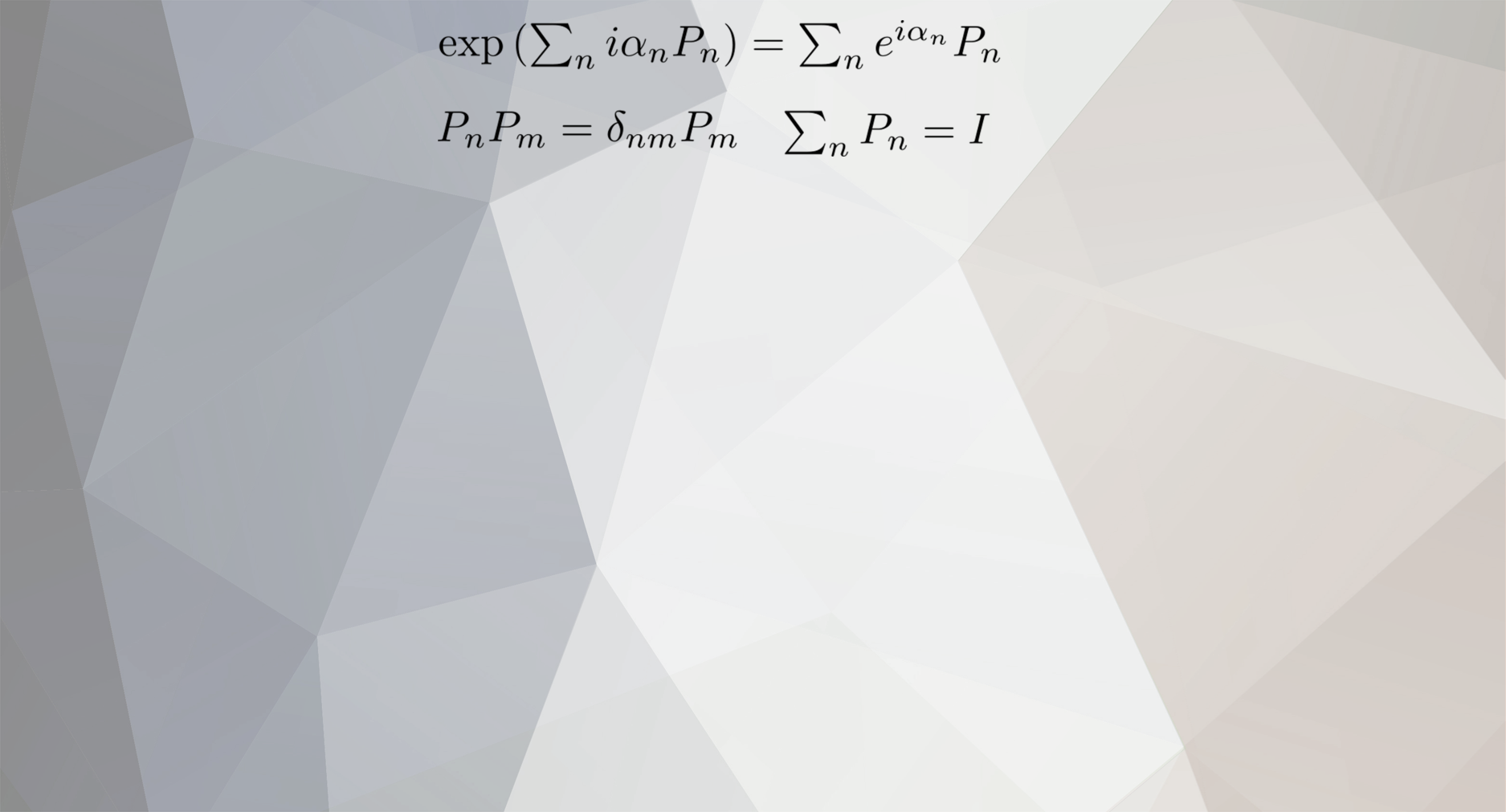

Thanks both for your useful comments. I'm starting to realise that the topic is vast, so fat chance that we can get to clarify all possible aspects. I'm trying to get up to speed in the computer-science concept of entropy beyond the trivial difference of a scale factor that comes from logs in base e to logs in base 2. It may also be interesting to notice that Shannon entropy is by no means only applied to messages, channels, senders, and receivers: (with my emphasis, and from https://en.wikipedia.org/wiki/Entropy_(information_theory)#Relationship_to_thermodynamic_entropy.) In the meantime I've reminded myself as well that people are using entropy (whether base 2 or e) to discuss problems in evolution dynamics. See, e.g., https://en.wikipedia.org/wiki/Entropy_(information_theory)#Entropy_as_a_measure_of_diversity https://www.youtube.com/watch?v=go6tC9VSME4&t=280s Even in the realm of physics alone, there are quantum concepts, like entanglement entropy, quantum entropy a la Von Neumann, defined as a trace of matrices, or even entropy for pure states, \( -\int\left|\psi\right|^{2}\ln\left|\psi\right|^{2} \). The unifying aspect of all of them is that they can all be written one way or the other, schematically as \( S(1,2,\ldots,n) = -\sum_{i=1}^{n} p(i)\,\log p(i) \) for any partition into cells or states of your system that allows the definition of probabilities p(1), ..., p(n). Entropy, quite simply, is a measure of how statistically flattened-out whatever system you're dealing with is, provided it admits a partition into identifiable cells that play the part of "states"; it is extensive (additive) for independent systems when considered together, and concave with respect to partitions into events: Being so general, it seems clear why it has far outstripped the narrow limits of its historical formulation.

Control parameters

Control parameters are any parameters that you can change at will.
In the case of an ideal gas, typically we refer to control parameters as the extensive variables of the system, like the volume and the energy. Those would be, I think, analogous to input data, program, ROM, because you can change them at will. Other things are going on within the computer that you cannot see. The result of a computation is unpredictable, as well as the running state of a program. As to the connection to physics, I suppose whether a particular circuit element is in a conducting state or an interrupted one could be translated into physics. But what's important to notice, I think, is that entropy can be used in many different ways that are not necessarily very easy to relate to the physical concept (the one that always must grow for the universe as a whole.) As @studiot and myself have pointed out (I'm throwing in some others I'm not totally sure about): no analogue of thermodynamic equilibrium; no cyclicity (related to ergodicity) => programs tend to occupy some circuits more likely than others; no reversibility; no clear-cut concept of open vs closed systems (all computers are open). But in the end, computers (I'm making no distinction with message-sending, so potentially I'm missing a lot here) are physical systems, so it would be possible in principle to write out their states as a series of conducting or non-conducting states subject to conservation of energy, and all the rest. Please, @Ghideon, let me keep thinking about your distinctions (1)-(4), but for the time being, I think physics has somehow been factored out of the problem in (1) and (2), but is not completely absent, if you know what I mean. It's been made irrelevant as a consequence of the system having been idealised to the extreme. In cases (3) & (4) there would be no way to not make reference to it.
Going back to my analogy physics/computer science, it would go something like this: Input (control parameters: V, E) --> data processing (equation of state f(V,P,T)=0) --> output (other parameters: P, T). Message coding would be similar, including message, public key, private key, coding function, etc. But it would make this post too long.
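
To make the schematic formula above concrete, here is a minimal Python sketch. The function name `shannon_entropy` and the two example coins are illustrative assumptions, not anything from the thread. It only checks the properties mentioned in the post: the base-2 versus base-e scale factor, additivity for independent systems, and the fact that a flatter distribution has higher entropy.

```python
import math

def shannon_entropy(probs, base=math.e):
    """Return -sum p*log(p) over the cells of a partition (hypothetical helper)."""
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # Cells with p = 0 contribute nothing (p*log(p) -> 0 as p -> 0).
    return -sum(p * math.log(p, base) for p in probs if p > 0)

# Two illustrative systems (assumed examples): a fair coin and a biased coin.
fair_coin = [0.5, 0.5]
biased_coin = [0.9, 0.1]

# Same entropy in bits (base 2) and in nats (base e): they differ by ln 2.
h_bits = shannon_entropy(fair_coin, base=2)
h_nats = shannon_entropy(fair_coin)

# Joint distribution of the two coins taken as independent systems.
joint = [p * q for p in fair_coin for q in biased_coin]
h_joint = shannon_entropy(joint)
h_sum = shannon_entropy(fair_coin) + shannon_entropy(biased_coin)
```

With these definitions, `h_nats` equals `h_bits * math.log(2)`, `h_joint` agrees with `h_sum` up to rounding, and the biased coin has lower entropy than the fair one.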

-

Which came first, the chicken or the egg?

I'm a stickler for clear definitions. I just don't know what this is doing on Brain Teasers and Puzzles.

-

How can information (Shannon) entropy decrease ?

I assume you mean that @studiot's system doesn't really change its entropy? Its state doesn't really change, so there isn't any dynamics in that system? It's the computing system that changes its entropy by incrementally changing its "reading states." After all, the coin is where it is, so its state doesn't change; thereby its entropy doesn't either. Is that what you mean? Please, give me some more time to react to the rest of your comments, because I think a bridge can be built between the physical concept of entropy, which I know rather well, and the concept used by computer people like you, which I'm just trying to understand better. Thank you for your inexhaustible patience, @Ghideon. I try to keep my entropy constant, but it's not easy.

-

Great Oxygenation Event: MIT Scientists’ New Hypothesis

No, I'm sure I made a mistake there. RuBisCO is about the Calvin-Benson cycle, right? Producing sugars. 😅 Thanks for catching me. OK. Thank you. I'm dabbling in chemistry/biochemistry, so some of the details went over my head, but I think I got the gist of it. Were those oxygenation events really that sudden? The banded iron formation suggests some kind of periodicity in that massive oxidation of the oceanic iron. Could a picture like the one you just sketched be compatible with some kind of periodicity? The closest I know from my past study of differential equations is the periodicity in populations that appears in models of competing species, like the Volterra model, which is about predator-prey dynamics. Does a pattern like that make any sense at all in the biochemistry of the oceans? I address this mainly to @CharonY and @exchemist, but other members should feel free to answer, of course. Calvin-Benson always reminds me of underwear 😆
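
For readers who haven't met the Volterra model, here is a minimal Python sketch of the kind of periodicity meant. It integrates the textbook predator-prey equations dx/dt = a x - b x y, dy/dt = c x y - d y with a fourth-order Runge-Kutta step. The parameter values, initial populations and step size are arbitrary assumptions for illustration; nothing here is fitted to ocean chemistry.

```python
import math

# Illustrative parameters (assumptions, chosen only to give a clean cycle).
A, B, C, D = 1.0, 0.5, 0.5, 1.0

def lotka_volterra(x, y):
    """Prey x and predator y: dx/dt = A x - B x y, dy/dt = C x y - D y."""
    return A * x - B * x * y, C * x * y - D * y

def rk4_step(x, y, dt):
    """Advance (x, y) by one classical Runge-Kutta step of size dt."""
    k1x, k1y = lotka_volterra(x, y)
    k2x, k2y = lotka_volterra(x + 0.5 * dt * k1x, y + 0.5 * dt * k1y)
    k3x, k3y = lotka_volterra(x + 0.5 * dt * k2x, y + 0.5 * dt * k2y)
    k4x, k4y = lotka_volterra(x + dt * k3x, y + dt * k3y)
    return (x + dt * (k1x + 2 * k2x + 2 * k3x + k4x) / 6,
            y + dt * (k1y + 2 * k2y + 2 * k3y + k4y) / 6)

def invariant(x, y):
    """Quantity conserved along exact orbits; closed orbits are its level sets."""
    return C * x - D * math.log(x) + B * y - A * math.log(y)

def simulate(x0=1.0, y0=1.0, dt=0.001, steps=20000):
    """Return the list of (prey, predator) pairs along the trajectory."""
    trajectory = [(x0, y0)]
    x, y = x0, y0
    for _ in range(steps):
        x, y = rk4_step(x, y, dt)
        trajectory.append((x, y))
    return trajectory

trajectory = simulate()
```

Because `invariant` stays constant along the trajectory, both populations keep cycling around the fixed point (D/C, A/B) instead of settling down, which is the periodic pattern the question alludes to.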

-

Great Oxygenation Event: MIT Scientists’ New Hypothesis

Very interesting thread and comments... RuBisCO is the single most abundant protein in the biosphere. I would be surprised if it didn't have to do with major oxygenation events in the past. But the origins of this oxygenation event may be buried in complexity.

-

How can information (Shannon) entropy decrease ?

😆 I would seriously like this conversation to get back on track. I don't know what relevance monoidal categories would have in the conversation. Or functors and categories, or metrics of algorithmic complexity (those I think came up in a previous but related thread and were brought in out of the blue by offended member.) Mentioned offended member then shifts to using terms such as "efficient" or "surprising," apparently implying some unspecified technical sense. Summoning highfalutin concepts by name without explanation and dismissing everything else everyone is saying on the grounds that... well, that they don't live up to your expectations in expertise, I don't think is the most useful strategy. I think entropy can be defined at many different levels depending on the level of description that one is trying to achieve. In that sense, I think it would be useful to talk about control parameters, which I think say it all about what level of description one is trying to achieve. Every system (whether a computer, a gas, or a coding machine) would have a set of states that we can control, and a set of microstates, that have been programmed either by us or by Nature, that we can't see, control, etc. It's in that sense that the concept of entropy, be it Shannon's or Clausius/Boltzmann's, etc. is relevant. It's my intuition that in the case of a computer, the control parameters are the bits that can be read out, while the entropic degrees of freedom correspond to the bits that are being used by the program, but cannot be read out --thereby the entropy. But I'm not sure about this and I would like to know of other views on how to interpret this. The fact that Shannon entropy may decrease doesn't really bother me because, as I said before, a system that's not the whole universe can have its entropy decrease without any physical laws being violated.

-

How can information (Shannon) entropy decrease ?

My code seems to have worked. True colours in full view.

-

Which came first, the chicken or the egg?

Samuel Butler Yes. As many have said or implied before, the egg is the arrangement of genetic material to be tested against the environment. The chicken is but the sequence of later developmental stages of that egg, set against different kinds of environments: pre-natal, peri-natal, young, reproductive, post-reproductive. There's no chicken that didn't come from an egg. There are thousands upon thousands of eggs that never make it to become a chicken.

-

How can information (Shannon) entropy decrease ?

What's with the quotation marks? You seem to imply that no word in a code like Swedish would be surprising. What word in Swedish am I thinking about now?

-

Examples of Awesome, Unexpected Beauty in Nature

I thought the last one was a Namib elephant, but I don't think it is. It's probably a big tusker from Tsavo, in Kenya. https://www.theguardian.com/environment/gallery/2019/mar/20/the-last-of-africas-big-tusker-elephants-in-pictures Thanks for the pictures. That's exactly what it looks like. Thank you.

-

What would happen to space if passage of time was accelerating? Equality principle. Similarity of empty space. A Shrinking matter theory that might actually work.

As to scale symmetries in general, studying the invariance properties of a certain theory under re-scalings can be a useful tool, but I wouldn't try to read too much into it physically*. The reason is that, while symmetries such as rotations and translations have a very transparent, very direct interpretation, that's not the case for scale transformations. Rotations can be easily viewed from the active point of view. I can rotate a piece of experimental equipment; I can rotate the whole laboratory (active transformations). I can think of extrapolating this operation to include the whole universe. In that purely theoretical, ideal scenario, actually rotating the whole universe would be mathematically equivalent to the inverse passive transformation (simple re-labelling of coordinates) of my frame of reference. Same goes for translations. You can't do the same with scalings. IMO, you would have to be very careful to explain how these observers could tell that their scales change from point to point or region to region (continuously as you move from one to the other?). I think @studiot has made this point before, and what I'm doing basically is rephrasing, or elaborating a little bit on what he said: That's what I meant by 'slippery slope.' *By 'physically' I mean considering different observers that 'see' different scales. How do they know?

-

What would happen to space if passage of time was accelerating? Equality principle. Similarity of empty space. A Shrinking matter theory that might actually work.

Maybe he's somebody else's brother and she's somebody else's sister. But I think they're cousins. https://en.wikipedia.org/wiki/John_C._Baez#Family Anyway, he explains there why length and mass can't scale independently from each other.

-

What would happen to space if passage of time was accelerating? Equality principle. Similarity of empty space. A Shrinking matter theory that might actually work.

The quote is due to Baez.

-

Why hasn't the earth cooled down by now?

Google: Search string: "half life of uranium 238"
Google: Search string: "how abundant is uranium on earth"
Google: Search string: "most abundant radioactive materials on earth"
Wikipedia: https://en.wikipedia.org/wiki/Uranium

| Isotope | Abundance | Half-life (t1/2) | Decay mode | Product |
|---|---|---|---|---|
| ²³²U | syn | 68.9 y | SF | – |
| | | | α | ²²⁸Th |
| ²³³U | trace | 1.592×10⁵ y | SF | – |
| | | | α | ²²⁹Th |
| ²³⁴U | 0.005% | 2.455×10⁵ y | SF | – |
| | | | α | ²³⁰Th |
| ²³⁵U | 0.720% | 7.04×10⁸ y | SF | – |
| | | | α | ²³¹Th |
| ²³⁶U | trace | 2.342×10⁷ y | SF | – |
| | | | α | ²³²Th |
| ²³⁸U | 99.274% | 4.468×10⁹ y | α | ²³⁴Th |
| | | | SF | – |
| | | | β−β− | ²³⁸Pu |

Radiation is dangerous, but how dangerous it is depends on how exposed you are to it, as well as on the radioactive material. Radioactive materials are present in many rocks, such as granite, but not so concentrated that they will give you cancer in any noticeable time.
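
As a rough illustration of why those half-lives matter for the question, here is a short Python sketch of the decay law N(t)/N(0) = (1/2)^(t / t_half). The half-lives are the ones quoted above. The age of the Earth (about 4.54×10⁹ years) is an assumption brought in for the example and is not stated in the post.

```python
# Half-lives as quoted in the table above, in years.
HALF_LIFE_U238_YEARS = 4.468e9
HALF_LIFE_U235_YEARS = 7.04e8

# Assumption for illustration: approximate age of the Earth in years.
EARTH_AGE_YEARS = 4.54e9

def fraction_remaining(elapsed_years, half_life_years):
    """Fraction of the original nuclei still undecayed after elapsed_years."""
    return 0.5 ** (elapsed_years / half_life_years)

u238_left = fraction_remaining(EARTH_AGE_YEARS, HALF_LIFE_U238_YEARS)
u235_left = fraction_remaining(EARTH_AGE_YEARS, HALF_LIFE_U235_YEARS)
```

Under that assumption, roughly half of the original uranium-238 is still around and still decaying, whereas only about one percent of the original uranium-235 is left.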

-

What is the Purpose of Life ?

Purpose is indistinguishable from the illusion of purpose.

-

Why is a fine-tuned universe a problem?

Right. The emphasis was meant on the 'big.' There was a time when we were new too, remember? It's been knocking at our door for so many decades that it still sounds new. You could say it's the future of physics, it's always been, and it always will be.

-

What would happen to space if passage of time was accelerating? Equality principle. Similarity of empty space. A Shrinking matter theory that might actually work.

I'll take a closer look at it later, but let me tell you physics is not just about making sense. Lord Kelvin's theory of electromagnetic knots made perfect sense, yet it's not what Nature is like. I have some comments to make about this idea of observer-dependent scaling. It's akin to a slippery-slope kind of argument, but for good reasons. Maybe tomorrow.

-

Why is a fine-tuned universe a problem?

Ah, I missed this, @MigL. I'm sorry. Let me get back to you later. It's based on some musings by Leonard Susskind. During his lectures on supersymmetry, he laments that SUSY probably is telling us something very deep, but we still don't know what it is. I'm quoting him almost literally. Essentially it rests on the (mathematical) fact that the SUSY generators, that exchange bosons for fermions, can be arranged in a way that produces space-time translations. Now that could be just a mathematical mirage, but it seems very profound. Another one is that, when you put superspace variables (anticommuting complex coordinates) together with space-time, the Lagrangians of relativistic quantum field theory appear as if by (mathematical) magic. That's what I mean by "really compelling." Now, I think you know me well enough to know that I don't easily fall for 'big' new ideas that have to do with forcing the mathematics. I'm convinced that the way to go is to look at the mathematical form that we know to be right, while trying to interpret it in a way that opens the way to an extrapolation. I'm not sure I'm explaining myself very well. Maybe tomorrow. (Famous last words.)

-

What would happen to space if passage of time was accelerating? Equality principle. Similarity of empty space. A Shrinking matter theory that might actually work.

https://math.ucr.edu/home/baez/lengths.html You don't contemplate quantum mechanics, that's why you don't understand. The world is quantum. \( \frac{\hbar}{mc} \) is a length. It's the length scale from which you must start making quantum relativistic corrections. You can't leave quantum mechanics out of the story. Otherwise your story is not about the real world. It's about a fantasy world. Solids are subject to quantum mechanics too.
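
To put a number on that length, here is a small Python sketch that evaluates \( \frac{\hbar}{mc} \) (the reduced Compton wavelength) for an electron. Using the electron as the example, and the CODATA values typed in below, are my assumptions for illustration; the function name is hypothetical.

```python
# Physical constants in SI units (CODATA 2018 values, entered by hand).
HBAR = 1.054571817e-34            # reduced Planck constant, J s
C = 299_792_458.0                 # speed of light, m/s
ELECTRON_MASS = 9.1093837015e-31  # kg

def reduced_compton_wavelength(mass_kg):
    """Length scale hbar/(m c) associated with a particle of the given mass."""
    return HBAR / (mass_kg * C)

electron_length = reduced_compton_wavelength(ELECTRON_MASS)
heavier_length = reduced_compton_wavelength(2 * ELECTRON_MASS)
```

For the electron this comes out at a few times 10⁻¹³ m, and doubling the mass halves the length, which is the sense in which mass and length cannot be rescaled independently once \( \hbar \) and c are fixed.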

-

What would happen to space if passage of time was accelerating? Equality principle. Similarity of empty space. A Shrinking matter theory that might actually work.

Mass scales as inverse length. By together, I didn't mean the same way.

-

What would happen to space if passage of time was accelerating? Equality principle. Similarity of empty space. A Shrinking matter theory that might actually work.

(My emphasis.) Who gave it? And are you a co-author? x-posted with Swansont. In any case, mass and length scale together due to relativity and quantum mechanics, so no, you can't pull this off. Unless you can give very good reasons why \( \hbar \) and c (speed of light) are irrelevant in physics.

-

What would happen to space if passage of time was accelerating? Equality principle. Similarity of empty space. A Shrinking matter theory that might actually work.

I think they're insurmountable. If the universe were made of just photons and neutrinos on a scale-invariant background, it would display scale invariance, but it isn't, so it doesn't. MigL has given a nice cosmological account that complements Markus'. I think the argument, when you consider the quantum theory, becomes even more involved, as you would have to prove that the beta function --the function that tells you how the interaction scales with energy, and thereby with length-- vanishes. I don't know how massless physicists would feel in a universe like that, but it's been known for a while that it doesn't work. I don't understand how the equivalence principle allows you to pull this off. If anything, the EP tells you that free-falling local observers cannot tell they're falling, so the standard model would be still there, in all its locally-valid glory, telling you the universe is not scale-invariant... I also hope you don't mean special-relativity length contraction & time dilation. That's nothing like scale invariance...

-

To All Women in Science

Emmy Noether's case is particularly poignant for me, because of the ratio (treatment she got)/(genius)x(human qualities). Plus the theorem that bears her name is my favourite of all mathematical physics. She seems to have been an extremely nice person too. But yes, there are many. Many who had it worse than Noether too. Absolutely. Standard bearers. Jane Goodall in particular is one of my heroes. I was in two minds about posting this. Most women prefer day-to-day action, rather than this kind of gesture. But then I thought, what the hell. I couldn't not do it.