Everything posted by joigus

-

Does some numerology intersect with standard mathematics?

Why would what is, at best, a perversion of standard mathematics be a part of standard mathematics?

- The Official JOKES SECTION :)

-

How about the LHC and FCC?

This is like not taking a dive in a pool because there's, say, a 10⁻¹⁰ chance of finding a shark in it. That is not a grown-up concern IMO. It's more akin to night terrors.

-

Why you have to be so careful accepting answers from AI

I needed to highlight these two comments and their sub-comments, as I think they're so close to the essence of what the problem might be if AI is given too much leeway in telling us what's next. Assigning statistical weights to conjectures, answers to hard questions, and the like, relies very heavily on previous answers to (as well as previous posing of) similar questions, without necessarily getting us any closer to unexpected avenues of inquiry, further questions, or counter-arguments.

-

The Official JOKES SECTION :)

- How about the LHC and FCC?

This kind of thinking has a tendency to snowball. As I remember, it reached a peak somewhere around 2008, bordering on horror sci-fi: https://cerncourier.com/a/the-day-the-world-switched-on-to-particle-physics/ I remember arguments both from theory (evaporation of black holes) and experiment (existence of high-energy particles from cosmic rays) putting the matter to rest.

- Probability is not impervious to paradoxes

Depends on how you define a reality. It would be essentially different from 'red, blue, and green' reality if we have to make room for quantum mechanics of spin. But that would take us off on a tangent.

- Probability is not impervious to paradoxes

I think you're getting confused here. Space itself is not of a statistical nature. Things going on in space are. Space is just a backcloth of everything else. You cannot set up a proper statistical question that involves only space with nothing in it. Wally can be here or Wally can be there. But there's nothing to be done with here and there without some kind of Wally.

- Probability is not impervious to paradoxes

Ok. I must say I'm considerably less sophisticated when it comes to probability than you probably picture me to be, and than you yourself are. The cases you mention of disqualified horses, dead heats, etc, IMO, would be completely smoothed out to zero by means of the 'frequentist approach': They almost never happen. The way I always understood probabilities is: You first make a hypothesis based on symmetries, known features, engineering specs, direct exploration, and so on. Then you do thousands upon thousands of experiments and apply the 'frequency test'. That way, you see whether your statistical hypothesis was correct. In some cases, like physics, you have physical principles that allow you to not guess in the total dark. In your horse-race example, your hypothesis would be based on a priori conditions on the horses: Their physique, breed, biometrics, and so on. Then you would have them race with different riders, atmospheric conditions, etc. Something like that.

I think it's fair to say Bayesian methods give you equal probabilities at first, but that's precisely because the first-order approach is to assume no bias, and then correct your hypothesis as you learn more about the different odds (the heart and soul of Bayesian methods or, as I like to say, probe more and more deeply into the sample space). So the first assignment of probabilities doesn't give you any better insight than the other ones.

I think you're right in your conclusion. But I don't think it's because infinity has limits. I think it's because infinity is not a number, it's more of a topological nature (the boundary of all numbers), so you cannot reach it numerically, which is quite the opposite of what you said in words, even if your intuition might have been right.

- Probability is not impervious to paradoxes
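The 'frequency test' (state a hypothesis, run many trials, compare relative frequencies with the hypothesis) can be sketched in a few lines. This is only an illustration under assumptions that are not from the thread: the system is a simulated six-sided die, the hypothesis is Laplace's equal probabilities, and the names `relative_frequencies` and `max_deviation` are made up for the example.

```python
import random
from collections import Counter

FACES = (1, 2, 3, 4, 5, 6)
HYPOTHESIS = {face: 1.0 / len(FACES) for face in FACES}  # assumed: equal probabilities


def relative_frequencies(n_trials, seed=0):
    """Roll a simulated die n_trials times and return f = F / N for each face."""
    rng = random.Random(seed)
    counts = Counter(rng.choice(FACES) for _ in range(n_trials))
    return {face: counts[face] / n_trials for face in FACES}


def max_deviation(frequencies, hypothesis=HYPOTHESIS):
    """Largest gap between an observed relative frequency and the hypothesis."""
    return max(abs(frequencies[face] - hypothesis[face]) for face in hypothesis)


if __name__ == "__main__":
    for n in (100, 10_000, 1_000_000):
        freqs = relative_frequencies(n)
        print(n, round(max_deviation(freqs), 5))
```

The gap between observed frequencies and the hypothesis should shrink as the number of trials grows. If it did not, that would be the signal to revise the hypothesis.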

Yes. https://en.wikipedia.org/wiki/Frequentist_probability

No. Please, explain.

- Is this a proper application of sesquation and quotation? My first new non Prime hypothesis. Can it be applied to multivariable equations?

Just today, I was fumbling for the word 'tetration' as a binary operation. Thank you.

- Probability is not impervious to paradoxes

In response to your first question: Yes, that's exactly what I mean by a frequentist definition.

In response to your second question: I assume by 'my scenario' you mean the molecule-speed vs probability scenario...? In that case, F as absolute frequency (number of times it produced a certain value) would be, say, 1 or 2, while the number of times it's been tried would be (ideally) infinitely many. And therefore, the relative frequency would be f = (1 or 2) / infinity = zero. So zero probability does not imply zero occurrences when infinitely many tries are involved. I hope we're talking about the same thing. If not, it's probably my fault, and I apologise.

Please, point out to me, if you can, where you made this qualification, as it escaped me. It's a very interesting point to me, as I think many misunderstandings when talking odds come from this, as 'random' could mean Laplace (finite sample space), binomial, Poisson, Gaussian, or who knows what...

- Probability is not impervious to paradoxes

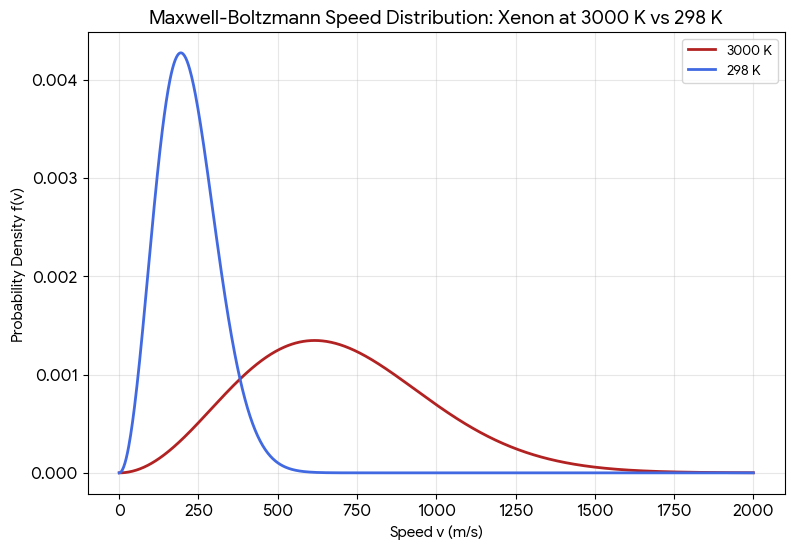
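The comparison between T = 298 K and T = 3000 K can be made quantitative with a rough sketch. It assumes the speeds follow the Maxwell-Boltzmann distribution and takes the standard deviation of the speed as one possible measure of 'how random' the speed is; both are assumptions of this example, as are the molar mass value used for Xenon and the function name `speed_mean_and_std`.

```python
import math

K_B = 1.380649e-23            # Boltzmann constant, J/K
N_A = 6.02214076e23           # Avogadro constant, 1/mol
M_XENON = 131.293e-3 / N_A    # mass of one Xe atom, kg (assumed molar mass 131.293 g/mol)


def speed_mean_and_std(temperature_k, mass_kg=M_XENON):
    """Mean and standard deviation of the Maxwell-Boltzmann speed distribution.

    <v>   = sqrt(8 k T / (pi m))
    <v^2> = 3 k T / m, so Var(v) = (3 - 8/pi) k T / m
    """
    kt_over_m = K_B * temperature_k / mass_kg
    mean = math.sqrt(8.0 * kt_over_m / math.pi)
    std = math.sqrt((3.0 - 8.0 / math.pi) * kt_over_m)
    return mean, std


if __name__ == "__main__":
    for t in (298.0, 3000.0):
        mean, std = speed_mean_and_std(t)
        print(f"T = {t:6.0f} K: mean speed = {mean:7.1f} m/s, spread = {std:6.1f} m/s")
```

Under these assumptions both the mean and the spread scale as the square root of T, so the distribution at 3000 K is roughly three times wider than at 298 K. That is the sense in which the speed is less predictable at the higher temperature.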

Therefore I should have said 'at T = 3000 K the Xenon molecule is much more random (much less predictable) than at T = 298 K'. I hope I didn't make my argument completely un-understandable. Sorry. I sometimes think I may well have been misdiagnosed as 'cognitively normal' when I may well belong in the 'cognitively-exceptional' spectrum. For some uncanny reason, I tend to express things the opposite way I mean to.

- Why you have to be so careful accepting answers from AI

Yes. Context means a lot for us humans. You were right to say it's not a tool for the masses. I've recently thought of this metaphor: AI, if used properly, should become some kind of pseudopodium stemming from our intelligence, not a prosthesis of it. Unfortunately we see too many examples of the latter, and too few of the former.

- Probability is not impervious to paradoxes

Yes, but simply declaring 'lack of information' doesn't determine the probability distribution, does it? Did you happen to take a look at Bertrand's circle paradox? In the case you provide, a relatively-simple change of variables to a certain s=f(pi) renders the probability distribution deterministic in s. If you're not given this information, every digit has equal probability of occurring (as far as we know, because nobody can decode pi in terms of digits), so it's a good generator for the prescription 'equal probability for every digit from 0 to 9'. I draw your attention to the fact that 'equal probability for every digit from 0 to 9' is just one way of defining 'random' in this context.

Yes, but (I insist) 'random' by itself doesn't mean much. Here's the distribution of probabilities for the speed of a Xenon molecule at temperatures T = 298 K and T = 3000 K. Both are random, and yet, at T = 3000 K the Xenon-molecule speed is much less random (much more predictable) than at T = 298 K:

I should have said 'much more random'. Sorry.

- Why you have to be so careful accepting answers from AI

You have to work a lot on your prompt, and diagnose mistakes like these to rephrase your next prompt. And I would add, never venture into concepts that you know even the experts have not reached an agreement on. Machines lack context, while we are context machines. A simple question like, 'how old are you?' could be understood to mean 'how long have you as an individual been around?' or 'how long have you humans been around?'

- Probability is not impervious to paradoxes

The infinite-monkeys theorem is a metaphor to illustrate the arguably paradoxical nature of probability. In that case, you take an extremely unlikely event and flood your laboratory with attempts to obtain a successful outcome. What paradox does it try to illustrate? That, in the limit, even an event with probability = 0 is possible if there is a continuum of outcomes accessible. The metaphor makes the point clear even though no number of monkeys, however big, will ever produce a continuum of outcomes (books written at random). But that has little to do with what I was trying to argue. Namely: That the word 'random' doesn't necessarily mean something precise in a number of cases.

- Probability is not impervious to paradoxes

Maybe so, but not by a humorous observation on the nature of our expectations. 'Everything that can go wrong will go wrong' is no probabilistic law. Starting with: it's manifestly false. The nature of our expectations is quite irrelevant to the laws of probability anyway...

- Probability is not impervious to paradoxes

Unfortunately, no. Murphy's law is not an actual law of probability, but a humorous observation on the nature of our expectations.

- Why is there a Great Divide between animal designs? Never read anything about this anywhere!

I don't think @MigL will like the way this is turning...

- Probability is not impervious to paradoxes
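Bertrand's paradox, which keeps coming up in this thread, can be checked numerically. The sketch below is an illustration, not Bertrand's own calculation: it picks a chord of the unit circle 'at random' in three commonly quoted ways (two random endpoints, a random distance along a radius, a random midpoint in the disk) and estimates the probability that the chord is longer than the side of the inscribed equilateral triangle. The function names and the Monte Carlo setup are choices made for this example.

```python
import math
import random

SIDE = math.sqrt(3.0)  # side of an equilateral triangle inscribed in the unit circle


def chord_from_endpoints(rng):
    """Two endpoints chosen uniformly on the circumference."""
    a = rng.uniform(0.0, 2.0 * math.pi)
    b = rng.uniform(0.0, 2.0 * math.pi)
    return 2.0 * abs(math.sin((a - b) / 2.0))


def chord_from_radial_distance(rng):
    """Distance of the chord from the centre chosen uniformly along a radius."""
    d = rng.uniform(0.0, 1.0)
    return 2.0 * math.sqrt(max(0.0, 1.0 - d * d))


def chord_from_midpoint(rng):
    """Chord midpoint chosen uniformly over the area of the disk.

    For a uniform point in the unit disk, the squared distance from the
    centre is uniform on [0, 1].
    """
    d_squared = rng.uniform(0.0, 1.0)
    return 2.0 * math.sqrt(max(0.0, 1.0 - d_squared))


def estimate(method, n_samples=200_000, seed=0):
    """Fraction of sampled chords that are longer than the triangle side."""
    rng = random.Random(seed)
    hits = sum(1 for _ in range(n_samples) if method(rng) > SIDE)
    return hits / n_samples


if __name__ == "__main__":
    for method in (chord_from_endpoints, chord_from_radial_distance, chord_from_midpoint):
        print(method.__name__, round(estimate(method), 3))
```

The three estimates should come out near 1/3, 1/2 and 1/4 respectively. Each recipe is 'unbiased' in its own variable, yet they disagree, which is the point about 'random' not being uniquely defined.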

Well, the word 'random' is a tricky one. As I said, there is no unique way to define 'random'. People tend to think of random as synonymous with 'unbiased'. Bertrand's paradox, which I also mentioned before, shows that the premise of randomness as one of total unbiasedness (equal probabilities for all the values of a variable within a range) gives different probabilities for different variables that equally describe the same problem. Namely:

Consider an equilateral triangle that is inscribed in a circle. Suppose a chord of the circle is chosen at random. What is the probability that the chord is longer than a side of the triangle?

Then Bertrand proceeds to calculate this probability by different methods, all equally unbiased, but with respect to different variables (two points chosen at random, a point and an angle, etc). The answer is different depending on which variables you choose.

The illusion that 'random' means something precise comes from: 1) Choosing a discrete set of outcomes, and 2) Assuming there is a probability distribution that's 'written in stone', like, eg, Laplace's rule of equal probabilities, some principle of symmetry implying that (example, the fair coin), etc, so that assigning probabilities is reduced to a counting problem. Otherwise, we need some kind of law or fundamental principle that tells us what the distribution is, like we have in statistical physics, for example.

- Why is there a Great Divide between animal designs? Never read anything about this anywhere!

Big arthropods were peculiar (if not exclusive) to the Carboniferous period, due to the high oxygen levels, rather than gravity. Number of appendages seems to be more of a developmental accident, as most members suggest. Embryonic development tends to follow body plans initiated long ago.

- Probability is not impervious to paradoxes

Yes, provided you are invited to just pick a letter. But even in that case, if you asked people to try to guess an answer that makes it correct, the sheer fact that people wanted to get it right would shift the probability distribution to more than .25 chance, only because they think that's the right answer! I think that's what @studiot meant when he compared this to the Monty Hall problem. I don't think it's a pure Monty Hall problem with conditional probability hidden in some semantic way. I think there's also a more-or-less hidden self-reference here that contributes to the paradox.

Yes, but then you're assuming a probability distribution: .25, .25, .25, .25 and you cannot escape the paradox either. .25+.25 = .5 so .25 would no longer be 'correct'. It is designed in such a way that, if you want to get it right, you'll get it wrong.

- Probability is not impervious to paradoxes
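The self-reference in the multiple-choice puzzle can be spelled out with a small consistency check. The option values below (25 %, 50 %, 60 %, 25 %) are an assumption: they follow the commonly circulated version of the puzzle and are not quoted in these posts, which only imply that 25 % appears twice. The picking distribution is the .25, .25, .25, .25 one, and `self_consistent_answers` is a name made up for the example.

```python
import math

# Assumed option values (hypothetical; the thread does not list them explicitly).
OPTIONS = {"A": 0.25, "B": 0.50, "C": 0.60, "D": 0.25}
# Assumed picking distribution: each letter equally likely.
PICK_PROBABILITY = {letter: 0.25 for letter in OPTIONS}


def chance_of_picking_value(value, options=OPTIONS, pick=PICK_PROBABILITY):
    """Probability that a random pick lands on an option showing this value."""
    return sum(pick[letter] for letter, shown in options.items()
               if math.isclose(shown, value))


def self_consistent_answers(options=OPTIONS, pick=PICK_PROBABILITY):
    """Values v such that 'the answer is v' agrees with the chance of picking v."""
    return sorted(
        value for value in set(options.values())
        if math.isclose(chance_of_picking_value(value, options, pick), value)
    )


if __name__ == "__main__":
    for value in sorted(set(OPTIONS.values())):
        print(value, chance_of_picking_value(value))
    print("self-consistent answers:", self_consistent_answers())
```

With these assumed options no value is self-consistent: 25 % is shown twice, so it is picked with probability .5, while 50 % and 60 % are each picked with probability .25. Change the picking distribution and the outcome can change, which is why 'at random' carries so much of the weight in the question.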

The way I see it, the most offending term in the posing of the problem is the mischievous use of the expression 'at random'. Whether we adopt a frequentist approach or an aprioristic one, distributions such as, eg,

A: 31.7 % B: 19.2 % C: 16.1 % D: 33 %

and,

A: 12.5 % B: 23.9 % C: 11.2 % D: 52.4 %

are exactly as 'at random' as each other. Too often, people confuse 'perfectly random behaviour' with 'equal probabilities' (Laplace). The best cautionary tale I know about this is Bertrand's paradox: https://en.wikipedia.org/wiki/Bertrand_paradox_(probability)

- The Official JOKES SECTION :)

Dear Studiot, it would be shocking indeed that I recognised a character from a novel I haven't read! I love British culture, but I cannot compete with you in that respect. I'm looking up Wyndham as we speak. Mind you, I must keep an eye on domestic affairs, literary and otherwise.

- How about the LHC and FCC?
