Everything posted by joigus

-

The equation of human personality

If there are such things as personality disorders, and personality disorders there are, there must perforce be something we can call a personality, something that can ultimately be attached to patterns of behaviour. I would suppose we speak of personality disorders when a person's patterns of behaviour make them dangerous to themselves and/or others, or unfit to lead a normal reproductive and socially harmonious life, etc. And I would suppose we speak of somebody's personality as such when patterns of behaviour (whether considered healthy or pathological) differ enough from one individual to another that attaching a personality to a particular individual makes some empirical sense. Neuroscience is addressing different aspects of personality with varying degrees of success, and I would say with a considerable rate of ongoing success. But personality is extremely complicated: genetically hardwired responses, environment-driven development, environment-induced behaviour imprinting subsequent constraints on molecular mechanisms, etc. What you won't find is a clear-cut definition of personality, IMO. Personality has emergence written all over it.

-

Equilibrium between [math]SO_3 [/math](product) and [math]SO_2,O_2[/math] (reactants)

Ah, OK. Got it! So, schematically, this is what I think is going on for \( 2SO_{2}+O_{2}\rightleftharpoons 2SO_{3} \) (concentrations in mol/L):

| | SO2 | O2 | SO3 |
|---|---|---|---|
| First equilibrium | 0.3 | 0.2 | 1.5 |
| Added SO2 (out of equilibrium) | 0.3 + 0.1x | 0.2 | 1.5 |
| Second equilibrium | 0.3 + 0.1x - 0.1 | 0.2 - 0.05 | 1.5 + 0.1 |

So, since \( 0.3+0.1x-0.1=0.2+0.1x \), and 125 is the equilibrium constant from the first equilibrium, \( K_c=1.5^{2}/\left(0.3^{2}\cdot0.2\right) \), I think the calculation should be

\[ 125=\frac{1.6^{2}}{\left(0.2+0.1x\right)^{2}\left(0.2-0.05\right)} \]

which is, I think, what @Dhamnekar Win,odd meant: that is, \( 0.3-0.1+0.1x \) instead of \( 0.3-0.1+x \). Am I right?
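To double-check the arithmetic, here is a short Python sketch. It only assumes the concentrations in the table and the usual expression \( K_c=[SO_3]^2/([SO_2]^2[O_2]) \); the helper name `k_c` is made up for illustration, and reading x as a number of moles depends on whatever vessel volume the original exercise specifies (the 0.1 factor is simply taken from the table).

```python
import math


def k_c(so2, o2, so3):
    """Concentration quotient for 2 SO2 + O2 <=> 2 SO3 (all in mol/L)."""
    return so3 ** 2 / (so2 ** 2 * o2)


# First equilibrium, taken from the table
K = k_c(0.3, 0.2, 1.5)

# Second equilibrium: [SO2] = 0.2 + 0.1x, [O2] = 0.15, [SO3] = 1.6.
# Solve 125 = 1.6**2 / ((0.2 + 0.1x)**2 * 0.15) for the SO2 concentration, then for x.
so2_needed = math.sqrt(1.6 ** 2 / (K * 0.15))
x = (so2_needed - 0.2) / 0.1

print(K, so2_needed, x)
```

Under those assumptions it gives \( K_c \approx 125 \) and \( x \approx 1.695 \); if the table's bookkeeping turns out to be wrong, the numbers change accordingly.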

-

Equilibrium between [math]SO_3 [/math](product) and [math]SO_2,O_2[/math] (reactants)

Silly me. You're right. So the total mass is constant? I didn't see that in the initial statement. By "How many moles of sulfur dioxide must be forced into the reaction vessel", I understood that new moles of sulfur dioxide are added to the equilibrium. From what you say, the new SO3 must come from the pre-existing equilibrium, right? I had difficulty inferring that from the statement. Sorry if I sound obtuse.

-

Equilibrium between [math]SO_3 [/math](product) and [math]SO_2,O_2[/math] (reactants)

Oh, I see; all of them are necessarily gases. The bit I don't understand is: what assumption in the exercise's statement underlies O2 going down from 0.2 mol/L to 0.2 - 0.05 = 0.15 mol/L when the extra SO2 is put in? I seem to be missing something here...

-

Equilibrium between [math]SO_3 [/math](product) and [math]SO_2,O_2[/math] (reactants)

Flawless application of Le Chatelier's principle. I have no objection to there being good practical reasons to remove oxygen, and I totally trust @exchemist on this. But why do you assume, as per the exercise's statement, that the oxygen concentration goes down? Are you recalculating concentrations because the total volume changes? Can you write out the whole chemical equation with the phases? I'm just curious.

-

The Volume Problem

I suppose you mean \( 0\cdot\infty= \) a finite quantity, with "infinity" representing a rate of change and 0 representing elapsed time. \( 2\cdot\infty=0 \) certainly doesn't make sense. If your question is the first one, it does make mathematical sense with the proper auxiliary qualifications (having to do with limits), but it's too idealised to correspond to a real situation.
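A minimal numerical sketch of why \( 0\cdot\infty \) only means something as a limit: let the elapsed time \( \Delta t \) shrink while the rate grows like \( 1/\Delta t \), \( 1/\Delta t^{2} \) or \( 1/\sqrt{\Delta t} \) (three hypothetical rates, chosen just for illustration). The product "rate times elapsed time" then tends to 1, to infinity, or to 0, respectively, so the answer depends entirely on how the two factors are tied together.

```python
import math


def rate_times_time(dt):
    """Total change = rate * elapsed time, for three rates that diverge as dt -> 0."""
    return (
        (1 / dt) * dt,             # rate ~ 1/dt: product stays at 1
        (1 / dt ** 2) * dt,        # rate ~ 1/dt**2: product diverges
        (1 / math.sqrt(dt)) * dt,  # rate ~ 1/sqrt(dt): product goes to 0
    )


for k in range(1, 7):
    dt = 10.0 ** -k
    print(dt, rate_times_time(dt))
```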

-

Badges in Scienceforums.net

I've seen more badgers than badges around here. Generally they don't last long.

-

Examples of Awesome, Unexpected Beauty in Nature

Another piece of technicolor nature: https://www.atlasobscura.com/places/cano-cristales From Wikipedia: https://en.wikipedia.org/wiki/Caño_Cristales

-

Badges in Scienceforums.net

For the sake of documentation,

-

Physicists create compressible optical quantum gas

The photons wouldn't resist compression; they would suddenly fall into the same degenerate state. They'd form a Bose condensate. It's a "do the opposite" story: fermions hate degeneracy; photons, being bosons, just love it.
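One way to see the "do the opposite" story is to compare the standard mean occupation numbers of a single-particle state: Bose-Einstein \( 1/(e^{x}-1) \) and Fermi-Dirac \( 1/(e^{x}+1) \), with \( x=(E-\mu)/k_BT \). As \( x\to0^{+} \) the bosonic occupation grows without bound (particles piling into that state), while the fermionic one can never exceed 1. This is textbook statistics, not a model of the experiment in the article; the x values below are arbitrary.

```python
import math


def bose_einstein(x):
    """Mean occupation of a bosonic state; x = (E - mu) / kT, must be > 0."""
    return 1.0 / (math.exp(x) - 1.0)


def fermi_dirac(x):
    """Mean occupation of a fermionic state; x = (E - mu) / kT, any real value."""
    return 1.0 / (math.exp(x) + 1.0)


for x in (2.0, 1.0, 0.1, 0.01):
    print(f"x={x:5}: bosons {bose_einstein(x):8.2f}   fermions {fermi_dirac(x):.3f}")
```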

-

Validity of the claim that Will Smith "could've killed" Chris Rock

A joke is a joke; if it's not funny, so much the worse for the joke, and the joker. But I don't think for a minute Chris Rock's life was in danger. C'mon.

-

Physicists create compressible optical quantum gas

I think energy alone is not enough to "overcome" the Pauli exclusion principle (PEP), and entropy must be playing a strong role. If all those neutrons tried to jump to the same gravity-excited level, they would still be subject to the PEP. It's (probably) because black holes have enormous entropies that this is possible, I think. By "overcome the Pauli exclusion principle" I mean creating enough entropy that quantum degeneracy is broken. Sorry, you're right: quantum degeneracy is never broken. To be more precise, I mean that there are so many nearby states available that, entropy being so high, quantum degeneracy doesn't have a fighting chance. A similar argument is sketched here: https://physics.stackexchange.com/questions/93988/does-black-hole-formation-contradict-the-pauli-exclusion-principle Tell me what you think.

-

Validity of the claim that Will Smith "could've killed" Chris Rock

LOL. By the way, what a master of self-control, and what a cool, cool man Chris Rock is, in every sense of the word. Kudos, Chris. My respect and admiration.

-

Physicists create compressible optical quantum gas

"Degeneracy" in quantum mechanics means that distinct states (states that differ in the values of some quantum numbers) have the same energy.

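A standard example of degeneracy, assuming the non-relativistic hydrogen atom with spin and fine structure ignored: the energy \( E_n\approx-13.6\,\text{eV}/n^{2} \) depends only on the principal quantum number \( n \), so all states with the same \( n \) but different \( (l,m) \) are degenerate. Counting them gives \( n^{2} \). The helper names are hypothetical.

```python
def hydrogen_level(n):
    """All (n, l, m) states sharing principal quantum number n (spin ignored)."""
    return [(n, l, m) for l in range(n) for m in range(-l, l + 1)]


def energy_ev(n):
    """Approximate Bohr energy; it depends on n only, not on l or m."""
    return -13.6 / n ** 2


for n in range(1, 5):
    states = hydrogen_level(n)
    print(n, energy_ev(n), len(states))  # len(states) == n**2
```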
-

Physicists create compressible optical quantum gas

"The photons begin to overlap" means that the photons, bosons that they are, begin to favour forming a common state (Bose condensation). What goes on in black holes (BHs) is the opposite quantum-degeneracy effect: neutrons are fermions, so no two of them can be in the same quantum state. In BH formation, this quantum-degeneracy pressure is overcome by gravitation.

-

How can information (Shannon) entropy decrease ?

Thanks!!! These notes are much more complete than the video.

-

How can information (Shannon) entropy decrease ?

Yeah, that's a beautiful idea, and I think it works. You can also subdivide reversible processes into infinitesimally small irreversible sub-processes, and prove it for them too. I think people realised very early on that entropy must be a state function, and the arguments were very similar. I tend to look at these things more from the point of view of statistical mechanics. Boltzmann's H-theorem has suffered waves of criticism through the years, but it seems to me to be quite robust. https://en.wikipedia.org/wiki/H-theorem#Criticism_and_exceptions I wouldn't call these criticisms "moot points," but I think all of them rest on some kind of oversimplification of physical systems. A note that some members may find interesting: I've been watching a lecture by John Baez dealing with Shannon entropy and the second principle of thermodynamics in the context of studying biodiversity. It seems that people merrily use Shannon entropy to run ecosystem simulations. Baez says there is generally no reason to suppose that biodiversity-related Shannon entropy reaches a maximum, but there are interesting cases where it does: namely, when there is a dominant, mutually interdependent cluster of species within the whole array of initial species.
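For readers who haven't met it, the Shannon entropy used in ecology is usually the Shannon diversity index \( H=-\sum_i p_i\ln p_i \), with \( p_i \) the relative abundance of species \( i \). A minimal sketch with made-up counts (not from Baez's lecture): for a fixed number of species \( S \), \( H \) is largest, \( \ln S \), when all species are equally common, and drops when one species dominates.

```python
import math


def shannon_diversity(abundances):
    """Shannon index H = -sum p_i ln p_i, computed from raw species counts."""
    total = sum(abundances)
    return -sum((n / total) * math.log(n / total) for n in abundances if n > 0)


even = [25, 25, 25, 25]    # hypothetical counts: four equally common species
dominated = [85, 5, 5, 5]  # same four species, one of them dominant

print(shannon_diversity(even), math.log(4))  # both about 1.386
print(shannon_diversity(dominated))
```

This only illustrates the index itself; it says nothing about the dynamical question of whether an ecosystem drives \( H \) towards its maximum, which is what Baez's discussion is about.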

-

Exobiology and Alien life:

My thoughts exactly.

-

Is Torture Ever Right ?

Yeah, there's an element of revenge there, I think. Tit for tat is very strongly etched in our behaviours, biologically. It's very hard to let go of it. And thanks, DR.

-

Is Torture Ever Right ?

Brilliant points there!

-

How can information (Shannon) entropy decrease ?

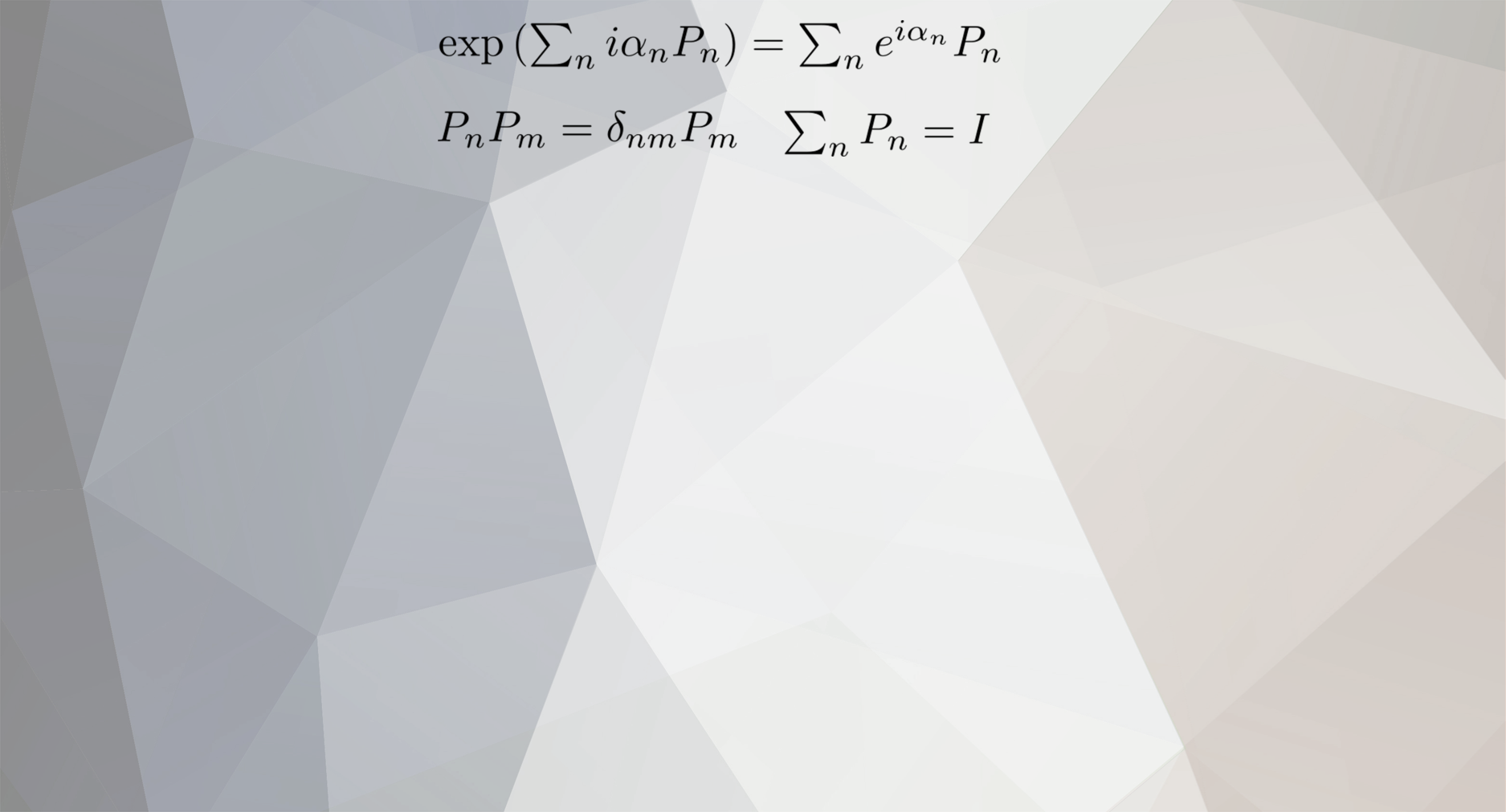

Actually, I don't think you're overthinking this at all. It does go deep. The \( P\left(\alpha,t\right) \)'s must be a priori probabilities or propensities, and they do not correspond to equilibrium states at all. I'm not familiar with this way of dealing with the problem. The way I'm familiar with is this: these \( P\left(\alpha,t\right) \)'s can be hypothesized by applying the evolution equations (classical or quantum) to provide you with quantities that play the role of evolving probabilities. This is developed in great detail, e.g., in Balescu. You attribute time-independent probabilities to the (e.g., classical-mechanics) initial conditions, and then you feed those initial conditions into the equations of motion to produce a time flow:
\[q_{0},p_{0}\overset{\textrm{time map}}{\mapsto}q_{t},p_{t}=q\left(q_{0},p_{0};t\right),p\left(q_{0},p_{0};t\right)\]
That induces a time map on dynamical functions:
\[A\left(q_{0},p_{0}\right)\overset{\textrm{time map}}{\mapsto}A\left(q_{t},p_{t}\right) \]
So dynamics changes dynamical functions, not probabilities. But here's the clever bit: we now trade averages of time-evolving variables, weighted with a fixed probability density of initial conditions, for averages of fixed variables, weighted with a time-evolving probability density on phase space. All of this is done by introducing a so-called Liouville operator, which can be written in terms of the time-translation generator, the Hamiltonian, through the Poisson bracket.
This Liouville operator produces the evolution of dynamical functions:
\[\left\langle A\right\rangle \left(t\right)=\int dq_{0}dp_{0}\rho\left(q_{0},p_{0}\right)e^{-L_{0}t}A\left(q_{0},p_{0}\right)\]
Because this first-order Liouville differential operator is antisymmetric under phase-space integration (a consequence of the flow preserving phase-space volume), you can swap its action onto the density by integrating by parts, and get
\[\int dq_{0}dp_{0}e^{L_{0}t}\rho\left(q_{0},p_{0}\right)A\left(q_{0},p_{0}\right)=\int dq_{0}dp_{0}\rho\left(q_{0},p_{0}\right)e^{-L_{0}t}A\left(q_{0},p_{0}\right)\]
You can think of \(e^{L_{0}t}\rho\left(q_{0},p_{0}\right)\) as a time-dependent probability density, while \(e^{-L_{0}t}A\left(q_{0},p_{0}\right)\) can be thought of as time-dependent dynamical functions. For all of this to work, the system should be well-behaved, meaning that it must be ergodic. Ergodic systems are insensitive to initial conditions, in the sense that long-time averages come out the same for almost all initial conditions. I'm sure my post leaves a lot to be desired, but I've been kinda busy lately.
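A numerical sketch of that swap, assuming the simplest system I can think of: a one-dimensional harmonic oscillator with \( H=(q^{2}+p^{2})/2 \), whose flow is an exact rotation of phase space, and a hypothetical Gaussian density of initial conditions. It computes \( \left\langle A\right\rangle(t) \) twice on a grid: once with the fixed density and the evolved function \( A(q_t,p_t) \), once with the density transported along the flow, \( \rho_t(q,p)=\rho(q_{-t},p_{-t}) \), and the fixed function. The two agree because the flow preserves phase-space volume, which is what the integration by parts expresses. The grid quadrature and all names (`rho0`, `flow`, `averages`) are illustrative choices, not Balescu's formalism; for this density the exact answer for \( A=q^{2} \) is \( \cos^{2}t+\sigma^{2} \).

```python
import math

# Hypothetical initial-condition density: a normalised 2D Gaussian in phase space.
SIGMA, Q0, P0 = 0.3, 1.0, 0.0


def rho0(q, p):
    """Fixed probability density of initial conditions."""
    r2 = (q - Q0) ** 2 + (p - P0) ** 2
    return math.exp(-r2 / (2 * SIGMA ** 2)) / (2 * math.pi * SIGMA ** 2)


def flow(q, p, t):
    """Exact flow of H = (q**2 + p**2) / 2 (dq/dt = p, dp/dt = -q): a rotation."""
    c, s = math.cos(t), math.sin(t)
    return q * c + p * s, p * c - q * s


def A(q, p):
    """An arbitrary dynamical function."""
    return q ** 2


def averages(t, half_width=3.0, n=200):
    """<A>(t) computed two ways with a midpoint rule on [-half_width, half_width]^2."""
    h = 2 * half_width / n
    grid = [-half_width + (i + 0.5) * h for i in range(n)]
    evolving_A = 0.0    # fixed rho, A evaluated at the evolved point (q_t, p_t)
    evolving_rho = 0.0  # density transported along the flow, fixed A
    for q in grid:
        for p in grid:
            evolving_A += rho0(q, p) * A(*flow(q, p, t))
            evolving_rho += rho0(*flow(q, p, -t)) * A(q, p)
    return evolving_A * h * h, evolving_rho * h * h


t = 0.7
lhs, rhs = averages(t)
print(lhs, rhs, math.cos(t) ** 2 + SIGMA ** 2)  # all three should agree closely
```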

-

How can information (Shannon) entropy decrease ?

OK, I've been thinking about this one for quite a while and I can't make up my mind whether you're just overthinking this or pointing out something deep. I'm not sure this is what's bothering you, but for the \( P\left( \alpha \right) \) to be well-defined probabilities, they should be fixed frequency ratios to be checked in an experiment reproducible infinitely many times. So this \( -\int P\left(\alpha\right)\ln P\left(\alpha\right)d\alpha \) already has to be the sought-for entropy, and not some kind of weird thing like \( -\int P\left(\alpha,t\right)\ln P\left(\alpha,t\right)d\alpha \). IOW, how can you even have anything like time-dependent probabilities? I'm not sure that's what you mean, but it touches on something that I've been thinking about for a long time. Namely: that many fundamental ideas we use in our theories are, to a great extent, tautological at the beginning, and they start producing results only when they're complemented with other ancillary hypotheses. In the case of the second principle "derived" from pure mathematical reasoning, I think it's when we relate it to energy, number of particles, etc., and derive the Maxwell-Boltzmann distribution, that we're really in business.
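For what it's worth, one standard way to get a genuinely time-dependent \( P(\alpha,t) \) is a Markov master equation. The sketch below uses a hypothetical lazy random walk on a ring of 10 discrete states (so the integral becomes a sum), not anything from the posts or from Balescu. The distribution starts concentrated on one state; because the transition matrix is doubly stochastic (rows and columns both sum to 1), the Shannon entropy \( -\sum_\alpha P(\alpha,t)\ln P(\alpha,t) \) can never decrease, and it approaches \( \ln 10 \).

```python
import math

N_STATES = 10  # hypothetical number of states alpha


def step(p):
    """One step of a lazy symmetric random walk on a ring: stay with prob 1/2,
    hop left or right with prob 1/4 each (a doubly stochastic update)."""
    n = len(p)
    return [0.5 * p[a] + 0.25 * p[(a - 1) % n] + 0.25 * p[(a + 1) % n] for a in range(n)]


def shannon(p):
    """Discrete Shannon entropy -sum p ln p (terms with p = 0 contribute 0)."""
    return -sum(x * math.log(x) for x in p if x > 0)


p = [1.0] + [0.0] * (N_STATES - 1)  # P(alpha, 0): all weight on one state
history = []
for t in range(50):
    history.append(shannon(p))
    p = step(p)

print([round(h, 3) for h in history[:6]])
print(round(history[-1], 4), round(math.log(N_STATES), 4))
```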

-

How can information (Shannon) entropy decrease ?

Thanks, @studiot. From skimming through the article, I get the impression that Prof. Ben-Naim goes a little bit over the top. He must be a hard-line Platonist. I'm rather content to accept that whenever we have a system made up of a set of states, and we can define probabilities, frequencies of occupation, or the like for these states, then we can meaningfully define an entropy. What you're supposed to do with that function is another matter and largely depends on the nature of the particular system. In physics there are constraints that have to do with conservation principles, which wind up giving us the Maxwell-Boltzmann distribution. But it doesn't bother me that it's used in other contexts, even in physics. I'll read it more carefully later.
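As a concrete illustration of how a conservation constraint turns a generic entropy into a physical distribution: maximising \( -\sum_i p_i\ln p_i \) subject to normalisation and a fixed mean energy gives Boltzmann weights \( p_i\propto e^{-\beta E_i} \) (Maxwell-Boltzmann being the continuous, ideal-gas version). The sketch below assumes a hypothetical four-level spectrum and target mean energy, finds \( \beta \) by bisection (valid because the mean energy decreases monotonically as \( \beta \) grows), and checks that another distribution with the same mean energy has lower entropy.

```python
import math


def boltzmann(energies, beta):
    """Max-entropy distribution at fixed mean energy: p_i proportional to exp(-beta * E_i)."""
    weights = [math.exp(-beta * e) for e in energies]
    z = sum(weights)
    return [w / z for w in weights]


def mean_energy(energies, beta):
    return sum(p * e for p, e in zip(boltzmann(energies, beta), energies))


def entropy(p):
    return -sum(x * math.log(x) for x in p if x > 0)


def solve_beta(energies, target, lo=-50.0, hi=50.0, iters=200):
    """Bisection on beta; mean energy decreases monotonically as beta grows."""
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if mean_energy(energies, mid) > target:
            lo = mid  # too much energy: need a larger beta
        else:
            hi = mid
    return 0.5 * (lo + hi)


levels = [0.0, 1.0, 2.0, 3.0]  # hypothetical energy levels (arbitrary units)
beta = solve_beta(levels, target=1.0)
p = boltzmann(levels, beta)
other = [0.25, 0.5, 0.25, 0.0]  # also has mean energy 1.0

print(beta, p, mean_energy(levels, beta))
print(entropy(p), entropy(other))  # the Boltzmann weights have the larger entropy
```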

-

What are you listening to right now?

Yeah, they wish!! All Andalusian horses are Andalusian; but not all Andalusians are horses, as you well know. Amen. 🤣🤣🤣