-

"What it is like to see the color red"

Snark suits you so well. Have you a substantive argument? Of course not. Else you'd have made it. I mentioned Mary's Room to @StringJunky because the argument challenges the claim he made, whether that link was given earlier or not.

-

"What it is like to see the color red"

Aren't you forgetting subjective experience? You can know everything there is to know about the physics of light and how the brain processes signals from the optic nerve, yet know nothing at all about "what it's like" to see the color red.

There's a famous philosophical thought experiment on this subject, Mary's Room. Mary is a neuroscientist who knows everything there is to know about photons, wavelengths, the biochemistry of the retina and the optic nerve, and the optical processing regions of the brain. She can tell you exactly what happens, down to the molecule, when red light enters the eye. Thing is, she's spent her entire life in a room painted black and white. Her computer screen is monochrome. She has never seen color. One day, she's allowed out of the room. She experiences color for the first time, and now knows something she did not know before: what it's like to see color. According to the argument, this shows that qualia, the subjective experiences of the senses, constitute knowledge, and that there is knowledge that cannot be obtained from mere understanding of physical processes. https://en.wikipedia.org/wiki/Knowledge_argument

Of course philosophers have objections, and this is not the last word on the subject. But to say, as you did, that "The perception of colour has three elements, I think: Wavelength of incident light, wavelength of the reflected light and then how the brain processes it," is to miss the most important element of perception: subjective experience. You cannot account for that by knowing only the physical mechanisms of the photons and the brain.

-

Artificial Consciousness Is Impossible

Evidence of what? Is there a paragraph missing? I didn't see a thesis being proposed. In any event, your thought experiment is subject to the gravity argument. Running a simulation of gravity in a computer doesn't attract nearby bowling balls (beyond the attraction caused by the mass of the computer itself). Nor would a simulation of a brain necessarily have a mind. We already have pretty good chatbots, and nobody thinks they're conscious. There's another commonly refuted point in your argument. We don't make flying machines by duplicating birds molecule by molecule. Airplanes fly by different mechanisms than birds do, and it's likely that emulating a brain part by part is not the way to implement artificial consciousness.

-

Suggestions for using AI

All, I could not resist posting this video of a public-spirited philosopher making a subtle critique of the concept of self-driving cars.

-

Math branch on finding similarities to numbers.

That's the point of the link I supplied. Calculus is the study of continuous change. Discrete calculus is the study of how individual discrete data points change. It's what you asked about. If you meant something else, perhaps you can be more clear. Another thought is that you might mean the "continuous-ization" of discrete functions. For example, the famous factorial function n! = 1 × 2 × 3 × ... × n is only defined for nonnegative integers. But we can extend it to the gamma function, which satisfies Γ(n + 1) = n! and is defined and continuous for all real and complex numbers except zero and the negative integers. Is that the kind of thing you had in mind?
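To make the factorial/gamma relationship concrete, here is a minimal Python sketch. It relies only on the standard library's `math.gamma` and `math.factorial`; the helper name `factorial_via_gamma` is made up for illustration.

```python
import math

def factorial_via_gamma(n: int) -> float:
    """Return n! computed through the gamma function, using gamma(n + 1) = n!."""
    return math.gamma(n + 1)

# At the nonnegative integers the two functions agree (up to floating point).
for n in range(10):
    assert math.isclose(factorial_via_gamma(n), math.factorial(n))

# Unlike the factorial, gamma also makes sense between the integers.
# For example "(1/2)!" = gamma(3/2) = sqrt(pi) / 2.
half_factorial = math.gamma(1.5)
print(half_factorial)
```

The point is only that the extension agrees with n! wherever n! is defined, and fills in values in between.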

-

Math branch on finding similarities to numbers.

https://en.wikipedia.org/wiki/Discrete_calculus

-

Concerning Infinity (of course)

Oops, I'm terribly sorry. You were replying to BB. For some reason I got confused and thought that was BB replying to me. My bad. Never mind whatever I wrote.

-

Concerning Infinity (of course)

Why is that a question? What do my own personal capabilities have to do with this? All that matters is that I can prove that the limit of the sequence 1/2, 3/4, 7/8, ... is 1. I truly don't understand why, having acknowledged this point, you are still making up meaningless questions. "Getting to the end," a meaningless concept, has been rendered irrelevant by the formal definition of a limit. I agree to no such thing. It's a philosophical question. Before the universe existed, were there numbers? Where were they? In the universe? Can you point to them? Identify their location? You are stating as a fact a matter of philosophy that you can't possibly hope to prove. According to some, yes. According to others, no. How about the game of chess? It's a formal system with precise rules. Did it exist before there were people? You've entirely changed the subject, I don't know why. Your belief is called mathematical Platonism. https://plato.stanford.edu/entries/platonism-mathematics/

-

Concerning Infinity (of course)

The purpose of the formalization is so that we don't have to think about meaningless questions like that. 1/n gets arbitrarily close to 0 as n gets large; that's the epsilon-N idea. So we say that 0 is the limit of 1/n, because 0 satisfies the epsilon-N condition. Then we don't have to confuse ourselves with unanswerable riddles like, "Does it ever reach 0?" Well, actually it doesn't, since for any natural number n, 1/n is not 0. But it gets arbitrarily close. That's the idea of the limit concept. It avoids the meaningless questions. I don't know why you say it "seems like the argument has become whether infinite functions reach their limits." On the contrary, the formalization makes those kinds of questions irrelevant.
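If it helps to see the epsilon-N condition for 1/n spelled out, here is a small Python sketch. The function names `find_N` and `check` are invented for this illustration, and exact fractions are used so floating-point rounding doesn't muddy the picture. A finite spot check is of course not a proof; the proof is the one-line observation that n > 1/epsilon implies 1/n < epsilon.

```python
from fractions import Fraction
import math

def find_N(eps: Fraction) -> int:
    """Return an N such that |1/n - 0| < eps for every natural number n > N."""
    if eps <= 0:
        raise ValueError("eps must be positive")
    # If n > floor(1/eps) then n > 1/eps, hence 1/n < eps.
    return math.floor(1 / eps)

def check(eps: Fraction, how_many: int = 1000) -> bool:
    """Spot-check the epsilon-N condition on the first `how_many` values past N."""
    N = find_N(eps)
    return all(abs(Fraction(1, n) - 0) < eps
               for n in range(N + 1, N + 1 + how_many))

print(find_N(Fraction(1, 100)))   # an N that works for eps = 1/100
print(check(Fraction(1, 100)))
```

Notice that nothing here asks whether 1/n ever "reaches" 0. Each term is nonzero; the definition only asks that, for any tolerance you name, the terms eventually stay within it.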

-

Concerning Infinity (of course)

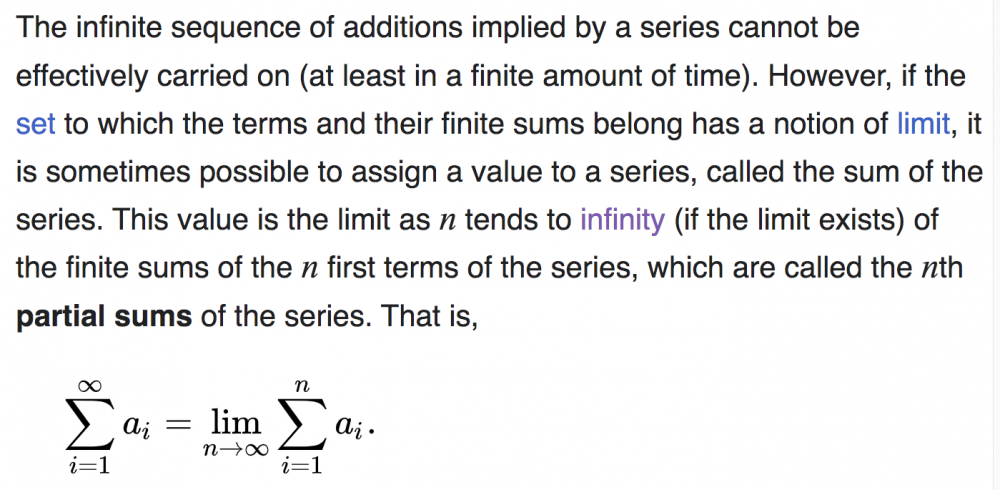

No, we use the partial sums trick to define the sum of an infinite series. As you note, the limit of a sequence is given by the epsilon-N definition. That's the right definition. (ps) The absolute value should be LESS THAN epsilon. Right, the sum of 1/2^n is "obviously" 1. Now if someone says to us, well I don't believe that, how can you make MATH out of it? How can you lay down some basic principles from which the fact that the sum is 1 will follow LOGICALLY? And THAT's what the limit of the partial sums definition is. It's a way of FORMALIZING what we already see must be true. In other words we already know what we want the answer to be. The partial sums are a clever way of constructing a framework in which what we "know" to be true can be logically proven to be true. Does that help? Are we converging, no pun intended, to understanding? The partial sums are a way of formalizing our intuition, exactly in the same way that the epsilon-N definition formalized our intuition that the limit of the sequence 1/2, 3/4, 7/8, ... should be 1. We already know what answer we want to get. The epsilon-N definition is a formalization that lets us logically derive the answer we wanted to get in the first place. In a sense, this whole business is an inversion of how people usually see math. People think that in math we have a problem and we want to find the answer. But in many cases, we already know what the answer should be, and we want to create the math that gives us that answer!

-

Concerning Infinity (of course)

Because that is how they define the sum of an infinite series. We want to define the sum 1/2 + 1/4 + 1/8 + ... But we don't know how to add up infinitely many things. So we DEFINE the sum to be the limit of the sequence of partial sums. The sequence of partial sums is 1/2, 3/4, 7/8, 15/16, ... Can you see that? Ok now the sequence of partial sums happens to have the limit 1. That follows from the definition of the limit of a sequence. Do we perhaps have to review that? That's actually the trickiest part of all this. Once we have that, the rest is easy. The sequence of partial sums has the limit 1. We are not defining 1 differently, it's the same old familiar number 1. We are noting that the limit of the sequence of partial sums is 1. Then, since our goal was to somehow define the infinite sum, we define the sum of the series 1/2 + 1/4 + 1/8 + ... to be whatever the limit of the sequence of partial sums is, which in this case happens to be 1. Is that any more clear? Once we know what the limit of the sequence of partial sums is, we just make an arbitrary rule to define the sum of the series as the limit of the sequence of partial sums. Only in this case it turns out to not really be arbitrary, but actually rather clever ... since we've found a sensible way to define the sum of an infinite series. The reason it's sensible, is that the sum of that series should be 1 if it's anything at all; and now we've found a way to define it logically so that it works out.
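For the record, here is the computation behind "the sequence of partial sums has the limit 1," written out. The symbols s_n (the n-th partial sum), ε and N are just the usual notation, introduced here for the sketch.

```latex
s_n \;=\; \sum_{k=1}^{n} \frac{1}{2^k} \;=\; 1 - \frac{1}{2^n},
\qquad\text{so}\qquad
\lvert s_n - 1 \rvert \;=\; \frac{1}{2^n}.

\text{Given } \varepsilon > 0,\ \text{choose } N \ge \log_2\!\left(\tfrac{1}{\varepsilon}\right).
\ \text{Then for all } n > N:\quad
\lvert s_n - 1 \rvert \;=\; \frac{1}{2^n} \;<\; \frac{1}{2^N} \;\le\; \varepsilon .

\text{Hence } \lim_{n\to\infty} s_n = 1,
\ \text{and by definition } \sum_{k=1}^{\infty} \frac{1}{2^k} = 1 .
```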

-

Concerning Infinity (of course)

Step 1: The limit of the sequence 1/2, 3/4, 7/8, 15/16, ... is 1.
Step 2: The sum of an infinite series is defined as the limit of the sequence of partial sums.
Step 3: The infinite series 1/2 + 1/4 + 1/8 + 1/16 + ... has the associated sequence of partial sums 1/2, 3/4, 7/8, 15/16, ...
Step 4: Therefore the sum of the infinite series 1/2 + 1/4 + 1/8 + 1/16 + ... is 1, by Step 2.

Which part of that logic is giving you trouble? If you can focus on the logic of these steps you will understand the process. You can have private intuitions about "the end" or whatever your intuitions may be, but when doing math, you need to focus on the math itself.
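The steps above can be sketched in a few lines of Python, if that makes them easier to follow. The function names `partial_sums` and `first_index_within` are made up for the illustration, and exact fractions are used so the numbers match the ones in the steps.

```python
from fractions import Fraction

def partial_sums(n_terms: int) -> list:
    """Return the first n_terms partial sums of 1/2 + 1/4 + 1/8 + ..."""
    total = Fraction(0)
    sums = []
    for k in range(1, n_terms + 1):
        total += Fraction(1, 2**k)
        sums.append(total)
    return sums

def first_index_within(eps: Fraction) -> int:
    """Smallest n with |s_n - 1| < eps, found by direct search."""
    n = 1
    total = Fraction(1, 2)
    while abs(total - 1) >= eps:
        n += 1
        total += Fraction(1, 2**n)
    return n

print(partial_sums(4))                         # the sequence from Step 3
print(first_index_within(Fraction(1, 1000)))   # where the gap drops below 1/1000
```

Every partial sum is strictly less than 1, and no finite amount of adding ever changes that. The gap to 1 halves at each step, so once it is below a given tolerance it stays below, which is all the limit definition asks for.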

-

Concerning Infinity (of course)

Yes. The partial sums of 1/2 + 1/4 + 1/8 + ... are 1/2, 3/4, 7/8, ... and the limit of that sequence of partial sums is 1. So the sum of the original series is 1 by definition. Definitions can't be correct or incorrect, only useful or not, insightful or not. The entire point of the formal definition is to bypass meaningless and unanswerable questions involving "the end." There are no answers to those kinds of speculations nor is there really any meaning in them. Instead, we substitute the clever definition that allows us to formally prove that the sum of 1/2^n is 1, and we let everyone have their own private intuitions as long as we agree to use the formalism.

-

Concerning Infinity (of course)

The notation says that the sum is defined as the limit of the partial sums. It's defined to be 1, the limit of the partial sums. That's actually the clever part of the definition. We can't make sense of "what is the sum after infinitely many operations?" or "Isn't there a tiny little bit left over?" and so forth. The limit definition avoids those problems by providing a precise definition of the sum of an infinite series.

-

Concerning Infinity (of course)

wtf
