Everything posted by wtf

-

"What it is like to see the color red"

Snark suits you so well. Have you a substantive argument? Of course not. Else you'd have made it. I mentioned Mary's Room to @StringJunky because the argument challenges the claim he made, whether that link was given earlier or not.

-

"What it is like to see the color red"

Aren't you forgetting subjective experience? You can know everything there is to know about the physics of light and how the brain processes signals from the optic nerve, yet know nothing at all about "what it's like" to see the color red. There's a famous philosophical thought experiment on this subject, Mary's Room. Mary is a neuroscientist who knows everything there is to know about photons, wavelengths, the biochemistry of the retina and the optic nerve and the optical processing regions of the brain. She can tell you exactly what happens, down to the molecule, when red light enters the eye. Thing is, she's spent her entire life in a room painted black and white. Her computer screen is monochrome. She has never seen color. One day, she's allowed out of the room. She experiences color for the first time, and now knows something she did not know before: What it's like to see color. She now has knowledge that she did not previously have. This shows that qualia, the subjective experience of the senses, constitutes knowledge. There is knowledge that can not be obtained from mere understanding of physical processes. https://en.wikipedia.org/wiki/Knowledge_argument Of course philosophers have objections, and this is not the last word on the subject. But to say, as you did, that "The perception of colour has three elements, I think: Wavelength of incident light, wavelength of the reflected light and then how the brain processes it," missed the most important element of perception: subjective experience. You can not account for that by knowing only of the physical mechanisms of the photons and the brain.

-

Artificial Consciousness Is Impossible

Evidence of what? Is there a paragraph missing? I didn't see a thesis being proposed. In any event, your thought experiment is subject to the gravity argument. Running a simulation of gravity in a computer doesn't attract nearby bowling balls (beyond the attraction caused by the mass of the computer itself). Nor would a simulation of a brain necessarily have a mind. We already have pretty good chatbots, nobody thinks they're conscious. There's another commonly refuted point in your argument. We don't make flying machines by duplicating birds molecule by molecule. Airplanes fly by different mechanisms than birds do; and it's likely that emulating a brain part by part is not the way to implement artificial consciousness.

-

Suggestions for using AI

All, I could not resist posting this video of a public-spirited philosopher making a subtle critique of the concept of self-driving cars.

-

Math branch on finding similarities to numbers.

That's the point of the link I supplied. Calculus is the study of continuous change. Discrete calculus is the study of how individual discrete data points change. It's what you asked about. If you meant something else, perhaps you can be more clear. Another thought is that you might mean the "continuous-ization" of discrete functions. For example the famous factorial function n! = 1 x 2 x 3 x ... x n is only defined for nonnegative integers. But we can extend it to the gamma function, which is defined and continuous for real and complex numbers other than zero and the negative integers, and which satisfies Gamma(n + 1) = n!. Is that the kind of thing you had in mind?
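To make the factorial-to-gamma extension concrete, here is a minimal sketch using Python's standard `math.gamma`. The helper name `factorial_via_gamma` is made up for illustration; the only fact relied on is the standard identity Gamma(n + 1) = n!.

```python
import math

def factorial_via_gamma(n: int) -> float:
    """Return n! computed through the gamma function, as gamma(n + 1)."""
    return math.gamma(n + 1)

# On small nonnegative integers the gamma function agrees with the factorial.
for n in range(10):
    assert math.isclose(factorial_via_gamma(n), math.factorial(n))

# Unlike n!, gamma also has a value between the integers.
# gamma(3/2), the value sometimes written (1/2)!, equals sqrt(pi) / 2.
half_factorial = math.gamma(0.5 + 1)
assert math.isclose(half_factorial, math.sqrt(math.pi) / 2)
```

The floating-point comparison uses `math.isclose` rather than `==`, since `math.gamma` returns a float.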

-

Math branch on finding similarities to numbers.

https://en.wikipedia.org/wiki/Discrete_calculus

-

Concerning Infinity (of course)

Oops, I'm terribly sorry. You were replying to BB. For some reason I got confused and thought that was BB replying to me. My bad. Never mind whatever I wrote.

-

Concerning Infinity (of course)

Why is that a question? What do my own personal capabilities have to do with this? All that matters is that I can prove that the limit of the sequence 1/2, 3/4, 7/8, ... is 1. I truly don't understand why, having acknowledged this point, you are still making up meaningless questions. "Getting to the end," a meaningless concept, has been rendered irrelevant by the formal definition of a limit. I agree to no such thing. It's a philosophical question. Before the universe existed, were there numbers? Where were they? In the universe? Can you point to them? Identify their location? You are stating as a fact a matter of philosophy that you can't possibly hope to prove. According to some, yes. According to others, no. How about the game of chess? It's a formal system with precise rules. Did it exist before there were people? You've entirely changed the subject, I don't know why. Your belief is called mathematical Platonism. https://plato.stanford.edu/entries/platonism-mathematics/

-

Concerning Infinity (of course)

The purpose of the formalization is so that we don't have to think about meaningless questions like that. 1/n gets arbitrarily close to 0 as n gets large, that's the epsilon-N idea. So we say that 0 is the limit of 1/n because it satisfies the epsilon-N condition. Then we don't have to confuse ourselves with unanswerable riddles like, "Does it ever reach 0?" Well actually it doesn't, since for any natural number n, 1/n is not 0. But it gets arbitrarily close. That's the idea of the limit concept. It avoids the meaningless questions. I don't know why you say it "seems like the argument has become whether infinite functions reach their limits." On the contrary, the formalization makes those kinds of questions irrelevant.
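Here is a small sketch of the epsilon-N condition for the sequence 1/n, in exact rational arithmetic. The function names `n_threshold` and `check` are invented for this example, and `check` only spot-checks finitely many terms, so it illustrates the definition and is not a proof.

```python
import math
from fractions import Fraction

def n_threshold(epsilon: Fraction) -> int:
    """Return an N such that |1/n - 0| < epsilon for every n > N."""
    # 1/n < epsilon exactly when n > 1/epsilon, so N = floor(1/epsilon) works.
    return math.floor(1 / epsilon)

def check(epsilon: Fraction, how_many: int = 1000) -> bool:
    """Spot-check the epsilon-N condition on the first `how_many` terms past N."""
    N = n_threshold(epsilon)
    return all(
        abs(Fraction(1, n) - 0) < epsilon
        for n in range(N + 1, N + 1 + how_many)
    )

# However small epsilon is, some N works. That is all "the limit is 0" means.
for eps in (Fraction(1, 10), Fraction(1, 1000), Fraction(3, 10**6)):
    assert check(eps)

# And no term of the sequence is ever equal to 0.
assert all(Fraction(1, n) != 0 for n in range(1, 10_000))
```

Note that nothing in the check asks whether the sequence "reaches" 0. The definition only asks for a threshold N for each epsilon.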

-

Concerning Infinity (of course)

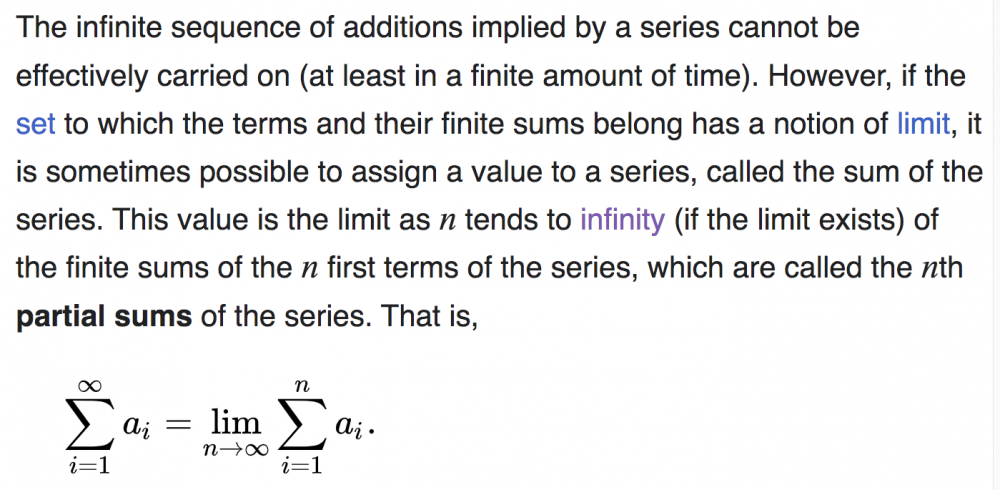

No, we use the partial sums trick to define the sum of an infinite series. As you note, the limit of a sequence is given by the epsilon-N definition. That's the right definition. (ps) The absolute value should be LESS THAN epsilon. Right, the sum of 1/2^n is "obviously" 1. Now if someone says to us, well I don't believe that, how can you make MATH out of it? How can you lay down some basic principles from which the fact that the sum is 1 will follow LOGICALLY? And THAT's what the limit of the partial sums definition is. It's a way of FORMALIZING what we already see must be true. In other words we already know what we want the answer to be. The partial sums are a clever way of constructing a framework in which what we "know" to be true can be logically proven to be true. Does that help? Are we converging, no pun intended, to understanding? The partial sums are a way of formalizing our intuition. Exactly in the same way that the epsilon-N definition formalized our intuition that the limit of the sequence 1/2, 3/4, 7/8, ... should be 1. We already know what answer we want to get. The epsilon-N definition is a formalization that lets us logically derive the answer we wanted to get in the first place. In a sense, this whole business is an inversion of how people usually see math. People think that in math we have a problem and we want to find the answer. But in many cases, we already know what the answer should be, and we want to create the math that gives us that answer!

-

Concerning Infinity (of course)

Because that is how they define the sum of an infinite series. We want to define the sum 1/2 + 1/4 + 1/8 + ... But we don't know how to add up infinitely many things. So we DEFINE the sum to be the limit of the sequence of partial sums. The sequence of partial sums is 1/2, 3/4, 7/8, 15/16, ... Can you see that? Ok now the sequence of partial sums happens to have the limit 1. That follows from the definition of the limit of a sequence. Do we perhaps have to review that? That's actually the trickiest part of all this. Once we have that, the rest is easy. The sequence of partial sums has the limit 1. We are not defining 1 differently, it's the same old familiar number 1. We are noting that the limit of the sequence of partial sums is 1. Then, since our goal was to somehow define the infinite sum, we define the sum of the series 1/2 + 1/4 + 1/8 + ... to be whatever the limit of the sequence of partial sums is, which in this case happens to be 1. Is that any more clear? Once we know what the limit of the sequence of partial sums is, we just make an arbitrary rule to define the sum of the series as the limit of the sequence of partial sums. Only in this case it turns out to not really be arbitrary, but actually rather clever ... since we've found a sensible way to define the sum of an infinite series. The reason it's sensible, is that the sum of that series should be 1 if it's anything at all; and now we've found a way to define it logically so that it works out.

-

Concerning Infinity (of course)

Step 1: The limit of the sequence 1/2, 3/4, 7/8, 15/16, ... is 1.
Step 2: The sum of an infinite series is defined as the limit of the sequence of partial sums.
Step 3: The infinite series 1/2 + 1/4 + 1/8 + 1/16 + ... has the associated sequence of partial sums 1/2, 3/4, 7/8, 15/16, ...
Step 4: Therefore the sum of the infinite series 1/2 + 1/4 + 1/8 + 1/16 + ... is 1, by Step 2.
Which part of that logic is giving you trouble? If you can focus on the logic of these steps you will understand the process. You can have private intuitions about "the end" or whatever your intuitions may be, but when doing math, you need to focus on the math itself.
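For anyone who wants to see the partial sums concretely, here is a short sketch in exact rational arithmetic. The name `partial_sums` is just for illustration. It computes finitely many partial sums and their distance from 1, which is the quantity the limit definition talks about.

```python
from fractions import Fraction

def partial_sums(count: int) -> list[Fraction]:
    """Return the first `count` partial sums of 1/2 + 1/4 + 1/8 + ..."""
    sums = []
    total = Fraction(0)
    for k in range(1, count + 1):
        total += Fraction(1, 2**k)  # add the k-th term, 1/2^k
        sums.append(total)
    return sums

sums = partial_sums(10)

# The first four partial sums are the ones listed above: 1/2, 3/4, 7/8, 15/16.
assert sums[:4] == [Fraction(1, 2), Fraction(3, 4), Fraction(7, 8), Fraction(15, 16)]

# The k-th partial sum falls short of 1 by exactly 1/2^k, so the gap can be
# made smaller than any epsilon by taking k large enough.
gaps = [1 - s for s in sums]
assert gaps == [Fraction(1, 2**k) for k in range(1, 11)]
```

No partial sum equals 1, and none needs to. The sum of the series is defined as the limit of these partial sums, and that limit is 1.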

-

Concerning Infinity (of course)

Yes. The partial sums of 1/2 + 1/4 + 1/8 + ... are 1/2, 3/4, 7/8, ... and the limit of that sequence of partial sums is 1. So the sum of the original series is 1 by definition. Definitions can't be correct or incorrect, only useful or not, insightful or not. The entire point of the formal definition is to bypass meaningless and unanswerable questions involving "the end." There are no answers to those kinds of speculations nor is there really any meaning in them. Instead, we substitute the clever definition that allows us to formally prove that the sum of 1/2^n is 1, and we let everyone have their own private intuitions as long as we agree to use the formalism.

-

Concerning Infinity (of course)

The notation says that the sum is defined as the limit of the partial sums. It's defined to be 1, the limit of the partial sums. That's actually the clever part of the definition. We can't make sense of "what is the sum after infinitely many operations?" or "Isn't there a tiny little bit left over?" and so forth. The limit definition avoids those problems by providing a precise definition of the sum of an infinite series.

-

Concerning Infinity (of course)

The sum is defined as the limit of partial sums. So if we have 1/2 + 1/4 + 1/8 + 1/16 + ..., the partial sums are: 1/2, 3/4, 7/8, 15/16, ... The limit of the sequence of partial sums is 1. So by definition the sum of the original infinite sum is 1. It's explained here on Wiki. https://en.wikipedia.org/wiki/Series_(mathematics) "it is sometimes possible to assign a value to a series, called the sum of the series. This value is the limit as n tends to infinity (if the limit exists) of the finite sums of the n first terms of the series, which are called the nth partial sums of the series."

-

Curiosity about Infinite Sets

Yes it's very strange. Yes it is allowed. In fact it's the defining property of infinite sets. We can't do that with a finite set! One way to define an infinite set is to say that it's a set that can be placed into bijection with a proper subset of itself. Only infinite sets have that property. And yes it is strange! This was noticed by Galileo in 1638. He observed that we can correspond the natural numbers with the perfect squares: 0 <-> 0, 1 <-> 1, 2 <-> 4, 3 <-> 9, etc. So the whole numbers must at the same time be more numerous and equally numerous with the squares. https://en.wikipedia.org/wiki/Galileo's_paradox If he had only stuck to math he would not have gotten into trouble with the Pope. There's a lesson in there somewhere.
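Galileo's correspondence can be written down as a pair of functions that undo each other. This is a sketch with made-up names (`to_square`, `from_square`). The round-trip check covers only finitely many numbers, so it illustrates the bijection without proving it.

```python
import math

def to_square(n: int) -> int:
    """Send the natural number n to the perfect square n^2."""
    return n * n

def from_square(s: int) -> int:
    """Send a perfect square back to its root; reject anything else."""
    root = math.isqrt(s)
    if root * root != s:
        raise ValueError(f"{s} is not a perfect square")
    return root

# The pairing from the post: 0 <-> 0, 1 <-> 1, 2 <-> 4, 3 <-> 9, ...
assert [to_square(n) for n in range(4)] == [0, 1, 4, 9]

# Each function undoes the other, so no number and no square is left unpaired.
assert all(from_square(to_square(n)) == n for n in range(1000))

# Yet the squares are a proper subset of the naturals: 2 is not a square.
assert 2 not in {to_square(n) for n in range(1000)}
```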

-

Curiosity about Infinite Sets

Here is what's going on. One day Alice eats a cheeseburger. The next day her vegetarian friend Bob says to Alice, "Alice, you are a meat eater." Alice indignantly replies: "But no, TODAY I have not eaten any meat. I only eat meat sometimes." And Bob explains that a meat eater is anyone who SOMETIMES eats meat. A vegetarian is someone who NEVER eats meat. If someone is not a vegetarian, they are a meat eater. Since Alice sometimes eats meat, she is clearly not a vegetarian. She is by definition a meat eater, by virtue of the fact that she SOMETIMES eats meat. Ok that's a bit of a shaggy dog story and if it's unclear I'll try to come up with a better example. But here is the relevant definition for our mathematical purposes:
* Definition: Two sets are said to have the same cardinality if THERE EXISTS a function between them that is a bijection.
The fact that there happen to be functions between the sets that are NOT bijections doesn't matter. All it takes is the existence of a single bijection to satisfy the definition. By this definition, we see that [math]\mathbb N[/math] and [math]\mathbb Z[/math] have the same cardinality. Because THERE EXISTS some function between them that is a bijection: namely, the function that corresponds them as follows:
0 <-> 0
1 <-> -1
2 <-> 1
3 <-> -2
4 <-> 2
5 <-> -3
6 <-> 3
and so forth. It is certainly the case that there are SOME functions between [math]\mathbb N[/math] and [math]\mathbb Z[/math] that are NOT bijections. But that doesn't matter. To have the same cardinality, there only needs to be a single bijection between the two sets; just as to be a meat eater, you only have to have one cheeseburger. Another example is a guy who is convicted of robbing a bank. For the rest of his life he'll be labeled a bank robber, even if he hasn't robbed a bank in years. Doing it once is enough to earn the label. Likewise, a single bijection between two sets is all it takes to declare the sets to have the same cardinality.
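The correspondence listed above can be given by an explicit formula: even naturals go to the nonnegative integers and odd naturals go to the negative integers. Here is a sketch, where the names `nat_to_int` and `int_to_nat` are invented for the example and the checks cover only a finite range.

```python
def nat_to_int(n: int) -> int:
    """Map a natural number to an integer: 0, 1, 2, 3, 4, ... -> 0, -1, 1, -2, 2, ..."""
    if n % 2 == 0:
        return n // 2          # even n goes to n/2
    return -((n + 1) // 2)     # odd n goes to -(n + 1)/2

def int_to_nat(z: int) -> int:
    """Inverse map: nonnegative z goes to 2z, negative z goes to -2z - 1."""
    if z >= 0:
        return 2 * z
    return -2 * z - 1

# Reproduces the table in the post.
assert [nat_to_int(n) for n in range(7)] == [0, -1, 1, -2, 2, -3, 3]

# The two maps undo each other in both directions.
assert all(int_to_nat(nat_to_int(n)) == n for n in range(10_000))
assert all(nat_to_int(int_to_nat(z)) == z for z in range(-5_000, 5_000))

# By contrast, the inclusion n -> n is a function from N to Z that is NOT a
# bijection (it misses -1). That doesn't matter: one bijection is enough.
assert -1 not in {n for n in range(10_000)}
```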

-

Suggestions for using AI

Ok first, note that you are not arguing with anything I've said. I presented an argument that some anti-car factions make. So you should take this up with them. But "too many roads already?" Well if you look at the gridlock around most major cities during rush hour, and you ADD a whole bunch of autonomous cars, it's pretty clear that we need MORE roads. That's the argument (which I'm reporting, not defending). That autonomous vehicles will increase demand for roads and decrease mass transit. The reason people want transit is that they don't want to drive their car to work. But if the car drives itself while they surf the net, they'll want cars and not transit. You'd see a lot of unintended consequences, one of which would be increased demand for roads. How can you say you want more autonomous vehicles, then say we have too many roads? When autonomous vehicles are ubiquitous, there won't be nearly enough roads. We'll have to pave over whatever green space is left. Unbelievable. You are still arguing about guns. You think I'm arguing about guns. I'm not. I don't care about guns. You are so obsessed with guns that you can't even read what I'm writing. If you were a pro-gun fanatic and you wanted to crush the houses of people opposed to guns, I'd still be calling you out on that. I don't care about the guns. I care about house-crushing as a tactic for people who hate actual democracy and the rule of law. So you want to crush the houses of law-abiding gun owners. And where does that stop? Maybe next week the mob wants to crush your house. Don't you think it's a problem when you operate society by violent mob rule organized against anybody that someone doesn't like? There's a famous quote from the play (and terrific film) A Man For All Seasons. "William Roper: So, now you give the Devil the benefit of law! Sir Thomas More: Yes! What would you do? Cut a great road through the law to get after the Devil? William Roper: Yes, I'd cut down every law in England to do that! 
Sir Thomas More: Oh? And when the last law was down, and the Devil turned 'round on you, where would you hide, Roper, the laws all being flat? This country is planted thick with laws, from coast to coast, Man's laws, not God's! And if you cut them down, and you're just the man to do it, do you really think you could stand upright in the winds that would blow then? Yes, I'd give the Devil benefit of law, for my own safety's sake!" Replace the Devil with gun owners and there's my eternal reply to you and everyone who thinks like you. Do you have a cat? Reason I ask is that sometimes cats type on keyboards when their human's not looking, in order to cause trouble. Did your cat type this? I ask you directly: Do you affirm or retract this quote and the sentiment it expresses? Do you deny writing it? Do you advocate smashing through the door and crushing the houses of people who exercise perfectly legal rights that happen to upset you? What's the difference between the attitude expressed here, and the lynch mobs of a hundred years ago? They were emotional too, and just as convinced of their own moral righteousness. Maybe you should just blame your cat for writing such a foolish and dangerous sentiment, which earlier you tried to pass off as a joke, and now want to pretend you never wrote. And whose houses will you smash until all the poor kids are fed? The catastrophe is destroying the law to smash the houses of people that the mob doesn't like this week. If you can't see that, you'll be surprised when the mob comes for you. Earlier I suggested studying the French revolution for a bloody datapoint, but the lesson seems to be lost on you. That was something about Elon Musk. Not my quote you're replying to. In other automated car news this week, a line of Cruise taxis lost connectivity due to a large music festival in San Francisco, and stopped dead in the street, tying up traffic in a busy night life area. 
Perfect illustration of the downside of centralized control of autonomous vehicles. What could possibly go wrong? https://www.nbcbayarea.com/news/local/cruise-cars-standstill-traffic-san-francisco-north-beach/3294264/

-

Suggestions for using AI

Yes you're right. I did go off the deep end into optimism (uncharacteristic of me) that autonomy and liberty would win. But the history of the world does not support my hope at all, let alone the world that we see around us. That was in response for my belief in democracy and autonomy. In that context I did not understand this remark. Perhaps I'm too optimistic about democracy too. I think my point was local control rather than centralized. In the 20th century we've seen the fate of the centralized systems: Mao and Stalin being two striking examples. Replacing a dictator with an AI is even worse. Who programs it? All algorithms have bias. Who are the ones in control? What a totalitarian nightmare. I am for local control wherever possible, and as much as possible. And I'm strongly against the idea of having giant computers run a centralized economy. My God, didn't anyone learn anything about the planned economies of China and Russia in the twentieth century? Central planning failed. People should take that into consideration when speculating on big electronic brains running the world or for that matter supporting world governments. Top-down autocracies don't work. Yes ok, sure. The point of anti-car people is the same. Instead of light rail, we'll be building more roads for autonomous trucks. And we won't need subways, we'll just have automated Ubers taking everyone around. The result would be less mass transit and more roads. It's not entirely the world we want to live in. I'm not necessarily making that argument myself, but I am noting that the argument could be made. Nice of you to grant Constitutional rights to SOME people, anyway. Can we see a list of the Constitutional rights you'll allow and which people you'll suspend them for so you can crush their houses? You did say crush their houses, did you not? The joke was a thin disguise for the obvious truth of how you feel about Constitutional rights in the face of laws you disagree with. 
Many people feel that way these days. It's the new leftist authoritarianism. I oppose it. Ahhhh, in the end the house-crushing is for the red hat brigade, the half of the country you don't like. You want to crush their houses. Got it. You disagree with their politics, AND you want to crush them as human beings. How do you think that kind of thinking is going to work out for all of us? I know it's very popular in some circles. It's dangerous. But gun rights are not the point. Whether you like guns or not, the second amendment is the law of the land. You have the opportunity to work to repeal it, or to locally nullify it with taxes and litigation. But you are interested in none of that. You want to crush the houses of the people who exercise legal rights that you don't like. I see that you are taking my words as gun advocacy. They should be taken as advocacy of following the law and following the Constitution, as opposed to simply wreaking violence on everyone you don't like. I actually have no interest in gun politics, no dog in the fight, no emotional interest one way or the other. I am passionate about having a civil, pluralistic society with respect for the law; as opposed to crushing the houses of people whose legal rights you simply don't like. You're ranting (pardon my frankness) about guns. You're so fanatical about the issue that you can't even see that I have no interest in guns and am not making a gun rights argument in the least. I'm standing for pluralism and respect for the law. I don't care if you start a crusade to overturn the second amendment. I do care that you want to skip the democratic process stage to get directly to the house crushing. That's a very dangerous impulse. That's the politics of a three year old. "I'm super emotional so I'll destroy things I don't like." An authoritarian three year old. Many people think and feel and act as you do these days. I'm concerned with that trend. That's why I'm calling it out. 
You're deadly serious about your authoritarian, anti-democratic desire to crush the houses of the people who have opinions and legal possessions that you don't like. Can you see that that's a very slippery slope for a society? Once you start crushing houses, what's going to keep your house standing up? See the French revolution for a bloody datapoint. Yes you are right, autonomy and democracy are in trouble, the only question is who gets to own everything.

-

Suggestions for using AI

Put the space shuttle on the street in a dense urban downtown, and see how it operates. Do you know what coning is? I just ran across this a while back. Anti-car activists in SF are against self-driving cars too, on the theory that wide adoption of self-driving cars reduces the political impetus for more and better transit. And actually that's a very good point, I wonder if the autonomous enthusiasts have thought about that? Why pay for buses and subways when everyone can just hop in a software-controlled Uber. So that's a social point to consider. I assume a lot of autonomous fans are also fans of transit. Autonomous cars are cars, and if you think we'd all be better off with fewer cars, autonomous vehicles are not the way to go. Just something to think about. So there are some anti-car activists in San Francisco called the Safe Street Rebels, I linked their website for anyone interested. https://www.safestreetrebel.com/ They have discovered that if you put an orange traffic cone on the hood of one of the experimental Cruise autonomous taxis cruising, so to speak, around town ... the vehicle stops dead in its tracks and can't move till someone from the Cruise company comes to reset it. This practice is called coning. Human ingenuity always finds a way around tech. I hope people will click on that link, there's a video of the group coning Cruise cars all over town. In the glorious one-size-fits-all future of the great AI brain that tells everyone exactly what they may do, there will always be people and groups hacking the system. I'm wondering, when @Peterkin is done busting down the doors of gun owners, will he bust down the doors of the members of Safe Street Rebels too? I was a little bit put off by the authoritarianism of that earlier remark and moved to push back if I may. It puts the AI-mind controlling all the cars in a very different light. 
Your car goes where the Big Electric Brain says it does, and we bust down the door of anyone exercising their Constitutional rights. You didn't suggest passing a referendum to tax guns out of existence. You didn't suggest a grass roots campaign to abolish the second amendment. Those are legitimate, democratic means of addressing your concerns about guns. But no, you just want to bust down the door of anyone whose opinion you disagree with. It sounds like your Big Electric Brain is just more authoritarianism and a lot less democracy. I'll take democracy and individual autonomy. The global control freaks will never win. I did want to add that many people wrote thoughtful replies to my link about the 18 deaths. My point is more that I oppose the centralization implied by the Big AI controller, and I put that together with the door kicking. That's more important to me than exactly how many people get killed. I'm perfectly well aware of the statistics on human drivers, about 100 a day in the US. And I agree that there are a lot of people who shouldn't be driving. If autonomous vehicles take some drunks off the road I'm all for it. It's the centralization and the authoritarianism that concern me. In fact even from an engineering point of view, I think a distributed network of autonomous vehicles is far preferable to a Big Brain controller architecture. For one thing, the latter is a single point of failure. When it glitches the entire country glitches. And it would be a target for every cyberhacker in the world. Banks, health care services, even cities have had to pay off cyberattackers because the attackers can not be defeated. Imagine one single big computer system running all the transportation in the country. A huge disaster waiting to happen.

-

problem with cantor diagonal argument

I've refrained from making any personal remarks, preferring to concentrate on the math and on the mathematical philosophy of the early twentieth century, in particular Brouwer's intuitionism and Russell's logicism. But if you insist ...

-

Suggestions for using AI

Now that's funny. But enough about the autonomous cars.

-

Suggestions for using AI

Authoritarian much? I push back gently on your dream to have a computer running our entire transportation system and you reply by fantasizing about breaking down citizens' doors because they're exercising Constitutional rights you don't happen to agree with? What else have you got planned for us?

-

Suggestions for using AI

18 confirmed autonomous vehicle deaths so far and counting. The evidence does not support your hope. https://www.slashgear.com/1202594/how-many-people-have-actually-been-killed-by-self-driving-cars/