-

Are there cosmic sources of negative radiation pressure?

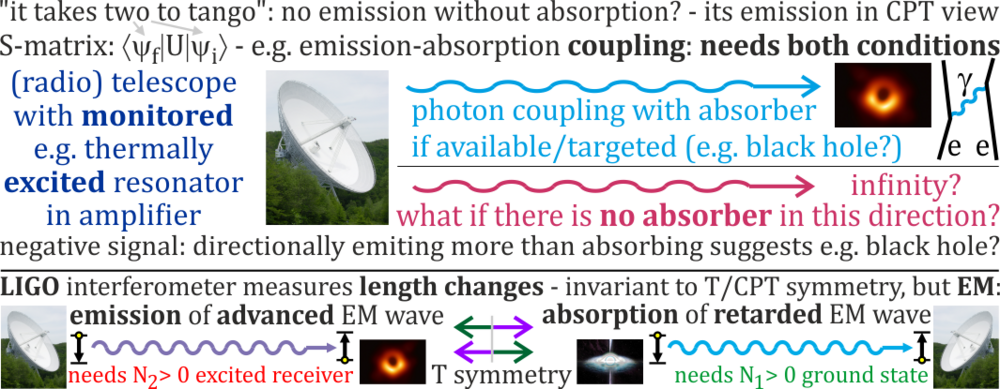

So how do you interpret these huge negative regions in the radio flux maps from https://iopscience.iop.org/article/10.3847/1538-4357/ac0e93/pdf ? Don't radio telescopes measure energy balance: positive if absorbed, negative if emitted? Doesn't the S-matrix <psi_f |U| psi_i> say that photon exchange depends not only on the emitter in psi_f, but also on the absorber in psi_i?

-

Are there cosmic sources of negative radiation pressure?

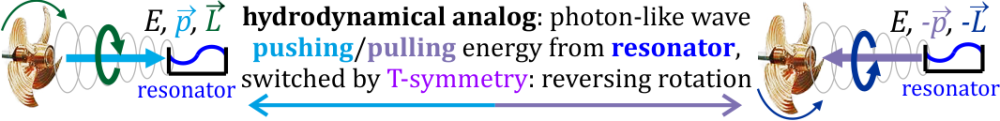

A positive signal means the telescope absorbs energy from the source, so it seems a negative one means the telescope emits energy? Like the wave behind a marine propeller: carrying energy, momentum, and angular momentum, it could excite a resonator, but with reversed rotation it could cause its deexcitation. There is also a mechanical analog: coupled oscillators periodically exchange energy like in Rabi cycles, which would be stopped without one of them acting as absorber. In astronomy such an absorber might be e.g. a black hole, with the emitter in the telescope. Anyway, they clearly see negative signals, e.g. in Fig. 1 from https://iopscience.iop.org/article/10.3847/1538-4357/ac0e93/pdf , usually saying this is just noise ... but these are huge regions of similar luminosity but reversed sign - maybe the hypothesis that they are actually positive could be verified? Or if the negativity remains, we should try to finally understand it ...
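
To make the coupled-oscillator analog concrete, here is a minimal sketch, assuming two identical unit-mass oscillators joined by a weak spring (the constants `K` and `KAPPA` are illustrative choices, not taken from any source). It uses the exact normal-mode solution and only shows the periodic back-and-forth energy exchange of the mechanical analog, nothing about telescopes:

```python
import math

K = 1.0        # spring constant of each oscillator (unit masses), assumed value
KAPPA = 0.05   # weak coupling spring between them, assumed value

OMEGA_S = math.sqrt(K)              # symmetric normal mode (x1 = x2)
OMEGA_A = math.sqrt(K + 2 * KAPPA)  # antisymmetric normal mode (x1 = -x2)


def state(t):
    """Exact solution for x1(0) = 1, x2(0) = 0, both initially at rest."""
    cs, ca = math.cos(OMEGA_S * t), math.cos(OMEGA_A * t)
    ss, sa = math.sin(OMEGA_S * t), math.sin(OMEGA_A * t)
    x1 = 0.5 * (cs + ca)
    x2 = 0.5 * (cs - ca)
    v1 = -0.5 * (OMEGA_S * ss + OMEGA_A * sa)
    v2 = -0.5 * (OMEGA_S * ss - OMEGA_A * sa)
    return x1, x2, v1, v2


def local_energies(t):
    """Energy held by each oscillator alone (small coupling-spring energy ignored)."""
    x1, x2, v1, v2 = state(t)
    e1 = 0.5 * v1 ** 2 + 0.5 * K * x1 ** 2
    e2 = 0.5 * v2 ** 2 + 0.5 * K * x2 ** 2
    return e1, e2


# Time after which the energy has moved from oscillator 1 to oscillator 2.
T_TRANSFER = math.pi / (OMEGA_A - OMEGA_S)

for label, t in [("start", 0.0), ("half cycle", T_TRANSFER), ("full cycle", 2 * T_TRANSFER)]:
    e1, e2 = local_energies(t)
    print(f"{label:>10}: E1 = {e1:.4f}, E2 = {e2:.4f}")
```

Each oscillator alternately plays the role of "emitter" and "absorber"; the exchange period is set by the difference of the two normal-mode frequencies.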

-

Are there cosmic sources of negative radiation pressure?

Radiation pressure is given by the vector <S>/c, where S = ExB/mu_0 is the Poynting vector (SI units): the focus is usually on positive, but it can also be negative: https://scholar.google.pl/scholar?q=negative+radiation+pressure , https://scholar.google.pl/scholar?q=optical+pulling If positive radiation pressure gives a positive signal in radio telescopes, shouldn't negative give negative? They clearly also see large regions of negative signal in radio flux maps, e.g. below from https://arxiv.org/pdf/2107.02695 What objects could generate negative radiation pressure? E.g. if a white hole would generate positive, shouldn't black holes generate negative?
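
For reference, a short worked version of the standard textbook expressions, assuming a monochromatic plane wave in vacuum with field amplitude $E_0$ and propagation direction $\hat{k}$ (these symbols are introduced here for illustration):

```latex
\mathbf{S} = \frac{1}{\mu_0}\,\mathbf{E}\times\mathbf{B},
\qquad
\langle \mathbf{S} \rangle = \frac{E_0^2}{2\mu_0 c}\,\hat{k}
                           = \frac{c\,\varepsilon_0 E_0^2}{2}\,\hat{k},
\\[6pt]
P_{\text{absorbing}} = \frac{|\langle \mathbf{S} \rangle|}{c}
                     = \frac{\varepsilon_0 E_0^2}{2},
\qquad
P_{\text{reflecting}} = \frac{2\,|\langle \mathbf{S} \rangle|}{c}.
```

The pressures are for normal incidence on a perfectly absorbing and a perfectly reflecting surface. The sign question in this thread is about the direction of the momentum transfer relative to $\hat{k}$, which these plane-wave formulas alone do not settle.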

-

How Spin of Elementary Particles Sources Gravity Question

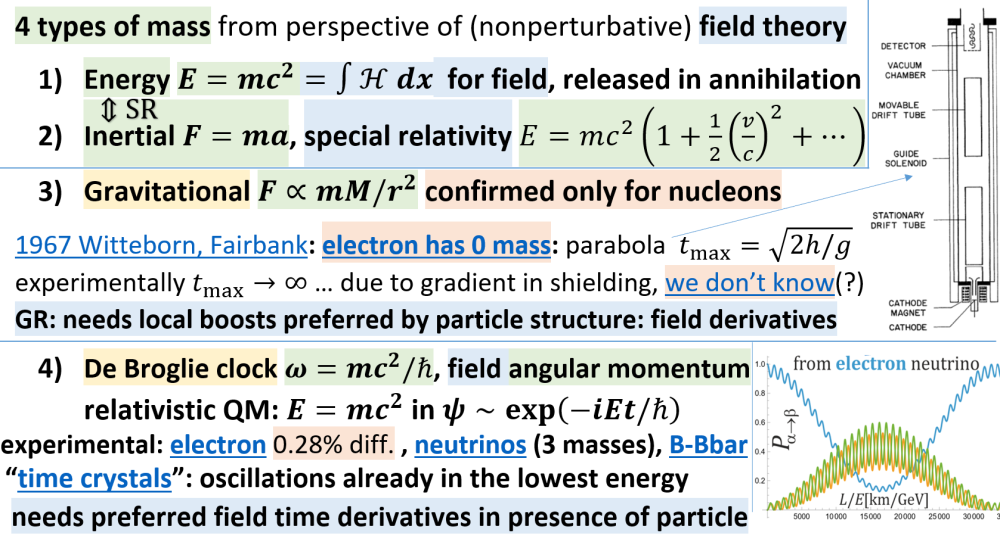

Sorry, it should be https://journals.aps.org/prl/abstract/10.1103/PhysRevLett.19.1049 2015 slides: https://indico.cern.ch/event/361413/contributions/1776296/attachments/1137816/1628821/WAG2015.pdf In summary, for the electron we only assume it - it would be great to finally test it.

-

How Spin of Elementary Particles Sources Gravity Question

We don't even know the gravitational mass of the electron - the experiment returned zero ( https://journals.aps.org/pr/abstract/10.1103/PhysRev.151.1067 ), but that was due to a charge gradient in the shielding, and it seems it was never properly repeated. Spin is mostly related to angular momentum - of an actually rotating field (not a point), which can also be viewed as a clock - directly confirmed for the electron ( https://link.springer.com/article/10.1007/s10701-008-9225-1 ); neutrinos also oscillate. Here is a field-theoretic mechanism to propel it: https://arxiv.org/pdf/2501.04036

-

Wheeler-Feynman absorber theory vs Asymmetry of Radiation?

Maxwell's equations have both retarded and advanced solutions, and their convex combinations are also allowed: Wheeler-Feynman assumes symmetric 1/2-1/2 contributions, but e.g. energy loss shows asymmetry instead - which should depend on the boundary conditions, e.g. Huw Price says it is because there are more absorbers than emitters ( https://link.springer.com/article/10.1007/BF00733218 ). It seems nearly everybody assumes 1-0, retarded only, instead, but maybe it is worth verifying experimentally, especially since the emitter/absorber imbalance might not be perfect? ( https://arxiv.org/pdf/2512.20692 ) Where do you think this Asymmetry of Radiation comes from? Should it really be a perfect 1-0? How to test it experimentally, to distinguish it from e.g. 0.999999-0.000001?
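
Written out, the convex combination in question looks as follows; the weight $\alpha$ is a symbol introduced here only for illustration, and $A^{\mu}_{\text{ret}}$, $A^{\mu}_{\text{adv}}$ denote the retarded and advanced potentials of the same source:

```latex
A^{\mu}(x) = \alpha\, A^{\mu}_{\text{ret}}(x) + (1-\alpha)\, A^{\mu}_{\text{adv}}(x),
\qquad 0 \le \alpha \le 1,
\\[6pt]
\alpha = \tfrac{1}{2}:\ \text{Wheeler-Feynman (symmetric)},
\qquad
\alpha = 1:\ \text{purely retarded (the usual assumption)}.
```

In this notation the experimental question above becomes: what upper bound can be placed on $1-\alpha$?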

-

Why we observe only retarded gravitational waves, not advanced?

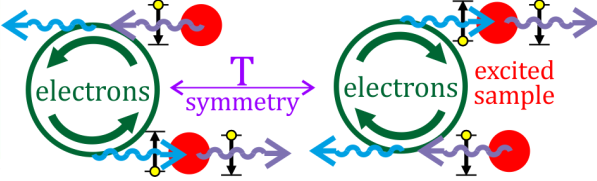

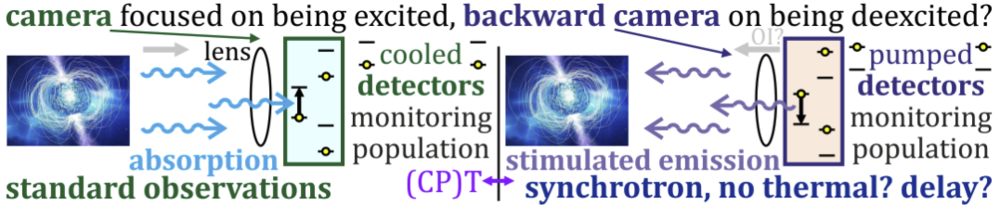

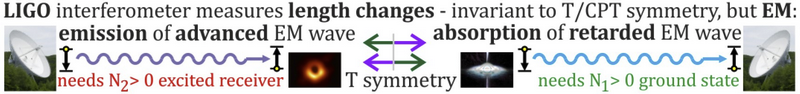

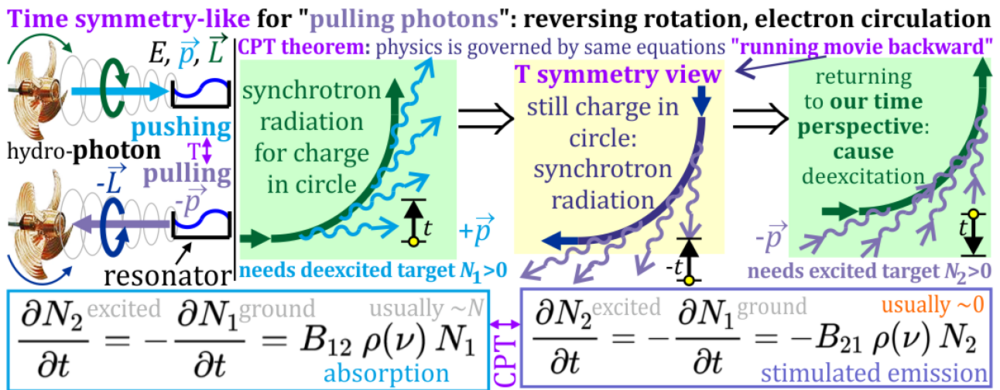

For both the marine propeller and shooting e.g. a free electron laser, indeed we don't control the details of individual particles, only their statistical behavior ... but this still allows causing excitation of the target - by a retarded EM wave. Applying T/CPT symmetry, i.e. reversing the rotation of the marine propeller, or reversing the electron trajectory of the free electron laser/synchrotron, the causation should reverse to causing deexcitation of the target - by an advanced EM wave ... with the requirement that this target was initially excited, which is not true e.g. for most radio telescopes - preventing them from observing advanced waves. Applying time symmetry to the synchrotron:

-

Why we observe only retarded gravitational waves, not advanced?

Sure, it seems highly suspicious that, among ~300 GW events, only a single EM counterpart was observed - including advanced waves in the considerations could help explain it. The current EM counterparts are retarded - being certain of their absence when one is required should indicate it was an advanced GW (?) To have a chance to observe advanced EM counterparts, we would need telescopes with an excited sensor - currently avoided by cooling.

-

Why we observe only retarded gravitational waves, not advanced?

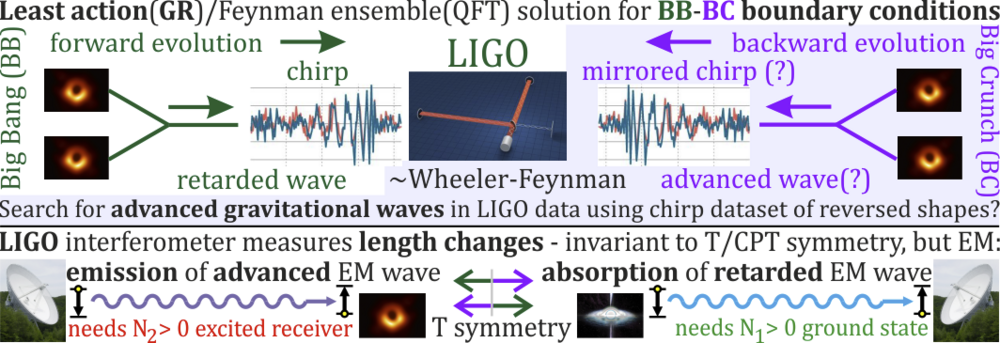

Sure, there is also statistics there, but stimulated emission - especially in superradiance, also in a laser ... or the wave behind a marine propeller pushing or pulling energy from a resonator - is quite deterministic. For example a white hole would emit, causing excitation in the sensor of a telescope. Applying T/CPT symmetry to this scenario, shouldn't a black hole cause deexcitation of the telescope sensor if it was prepared as excited? GR is solved by the least action principle - treating spacetime as a 4D membrane minimizing tension as action - based on boundary conditions in both time directions. If there are e.g. orbiting supermassive black holes there, shouldn't the distortions they create propagate in both directions of this 4D membrane? (for https://theconversation.com/to-map-the-vibration-of-the-universe-astronomers-built-a-detector-the-size-of-the-galaxy-244157 ) Solving GR by least action, or QFT by Feynman ensembles, is CPT symmetric and requires Einstein's block universe philosophy of time - that we travel through an already found 4D solution ... e.g. in the S-matrix <psi_f | U | psi_i>, with one amplitude coming from the past and the second from the future, we multiply them e.g. in the Born rule - allowing for the very nonintuitive Bell violation.

-

Why we observe only retarded gravitational waves, not advanced?

Thermodynamics is for spontaneous emission, while here we are talking about stimulated emission. It is valuable to think about the hydrodynamical analog: the wave behind a marine propeller carries energy, momentum and angular momentum - like a photon. We can practically apply time symmetry - just reversing its rotation: getting a pulling photon, causing deexcitation of the target. Going to the similar EM case, we can analogously apply time symmetry to synchrotron radiation - just reversing the electron trajectory, this way switching between absorption and stimulated emission acting on the target:

-

Why we observe only retarded gravitational waves, not advanced?

There is the Feynman diagram of photon exchange between e.g. two coupled electrons - current cooled EM receivers are focused on the retarded case: the photon was emitted by the target and absorbed by the sensor ... for advanced you need the CPT version of this diagram - reversed coupling: the photon emitted by the receiver and absorbed by the target.

-

Why we observe only retarded gravitational waves, not advanced?

EM receivers are focused on absorption - of the retarded wave. For advanced you need its time-symmetric analog - a receiver focused on emission, which requires its initial excitation ... usually avoided e.g. by cooling the radio telescope amplifier.

-

Why we observe only retarded gravitational waves, not advanced?

Sure, while being older than the age of the Universe would be a nice argument for advanced, luminosity distance is only one of the 3 types of distances they are using, so it is only a suggestion ... it is the last argument I have used, the remaining 3 are much stronger. The clearest would be a certain lack of a (retarded) EM counterpart when one is required - if excluding retarded, wouldn't we have to consider that it was advanced? Such cases might be a matter of months now. What other ways could we use to distinguish retarded from advanced? Observing "too early to happen" events, like with the black hole mass gap, seems such a way - could e.g. this 66 + 85 -> 142 merger be an advanced wave? Then there are pulsar timing arrays seeing vibrations of the Universe - claimed to require orbiting SMBHs ... if retarded ones seem insufficient, couldn't they also be advanced? Any other arguments for/against advanced GW?

-

Why we observe only retarded gravitational waves, not advanced?

You have written that we are testing - if retarded vs advanced, how is it done? For GR the least action principle is used. I have gathered some more arguments. The main source of gravitational wave events is just the orbiting of e.g. two black holes, and evolving toward minus time orbiting remains orbiting, so using Euler-Lagrange toward minus time (t -> -t), or the least action principle, similar waves should be generated - for us being advanced, of similar chirp shape as retarded. LIGO just measures lengths - invariant under time symmetry, so it should see both retarded and advanced waves. Therefore, maybe some of the current ~300 events ( https://catalog.cardiffgravity.org/ ) could turn out to be advanced? Some arguments:

- the ultimate confirmation should be a certain lack of a (retarded) EM counterpart when one is required (e.g. neutron star merger), still only 1 per ~300 observed, leaving the advanced wave possibility (?),
- some events are believed to happen too early, like the 66 + 85 -> 142 merger starting in the 50-120 black hole Mass Gap, e.g. https://www.symmetrymagazine.org/ar...st-scale-could-explain-impossible-black-holes - advanced would have more time,
- pulsar arrays show vibrations of the Universe requiring more orbiting supermassive black holes than expected - https://theconversation.com/to-map-...uilt-a-detector-the-size-of-the-galaxy-244157 - advanced could add them,
- the largest observed luminosity distance is ~27Gly: twice the age of the Universe - maybe it is worth considering advanced?
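
As a sanity check on the luminosity-distance argument, here is a rough sketch of how a luminosity distance of ~27 Gly translates into redshift and light-travel (lookback) time. It assumes a flat LambdaCDM model with Planck-like parameters (`H0_KM_S_MPC = 67.7`, `OMEGA_M = 0.31`); these values and all function names are illustrative assumptions, not taken from the event catalog:

```python
import math

# Assumed flat LambdaCDM parameters (illustrative, Planck-like values).
H0_KM_S_MPC = 67.7
OMEGA_M = 0.31
OMEGA_L = 1.0 - OMEGA_M

C_KM_S = 299792.458
GLY_PER_MPC = 3.2616e-3  # 1 Mpc is about 3.2616 million light years

HUBBLE_DISTANCE_GLY = C_KM_S / H0_KM_S_MPC * GLY_PER_MPC  # c / H0
HUBBLE_TIME_GYR = HUBBLE_DISTANCE_GLY  # 1 / H0 (light covers 1 Gly per Gyr)


def expansion_rate(z):
    """Dimensionless Hubble rate E(z) = H(z) / H0 for flat LambdaCDM."""
    return math.sqrt(OMEGA_M * (1.0 + z) ** 3 + OMEGA_L)


def integrate(f, a, b, n=2000):
    """Composite trapezoid rule."""
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))
    for i in range(1, n):
        total += f(a + i * h)
    return total * h


def luminosity_distance_gly(z):
    comoving = HUBBLE_DISTANCE_GLY * integrate(lambda x: 1.0 / expansion_rate(x), 0.0, z)
    return (1.0 + z) * comoving


def lookback_time_gyr(z):
    return HUBBLE_TIME_GYR * integrate(
        lambda x: 1.0 / ((1.0 + x) * expansion_rate(x)), 0.0, z
    )


def redshift_for_luminosity_distance(d_gly, z_lo=0.0, z_hi=20.0):
    """Bisection; the luminosity distance grows monotonically with z."""
    for _ in range(60):
        mid = 0.5 * (z_lo + z_hi)
        if luminosity_distance_gly(mid) < d_gly:
            z_lo = mid
        else:
            z_hi = mid
    return 0.5 * (z_lo + z_hi)


z_event = redshift_for_luminosity_distance(27.0)
t_event = lookback_time_gyr(z_event)
print(f"z = {z_event:.2f}, lookback time = {t_event:.1f} Gyr")
```

Under these assumptions the redshift comes out a little above 1 and the lookback time stays below the ~13.8 Gyr age of the Universe, which is why the luminosity distance alone is the weakest of the four arguments.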

-

Why we observe only retarded gravitational waves, not advanced?

Sure, e.g. when throwing a rock into a lake, which is symmetric in the equations, asymmetries of the solutions appear ... for physics we know many such asymmetries, like the entropy gradient, emission asymmetry (e.g. a circulating electron losing energy), or the tendency for black hole formation in the direction away from the Big Bang. But when solving physics by least action (GR) or Feynman ensemble (QFT) using e.g. boundary conditions in the Big Bang and Big Crunch, they seem very similar, both just hot soups - with symmetric ways of solving from symmetric boundary conditions, shouldn't the solution also be symmetric? Reversing e.g. the mentioned 3 asymmetries near the Big Crunch, like in the diagram above ( https://scienceforums.net/topic/140178-why-we-observe-only-retarded-gravitational-waves-not-advanced/#findComment-1304506 )? Both DESI observations and the revisiting of supernova data (e.g. Sabine's https://www.youtube.com/watch?v=2VpP-qXuJMc ) suggest the acceleration is slowing down ... but sure, the Big Crunch is only a hypothesis now, just convenient for getting intuitions - valuable also if it is not true. Least action GR is deterministic, but sure, there is also QFT - solved e.g. by the <psi_f |U| psi_i> S-matrix, also using boundary conditions in both time directions, assuming a Feynman ensemble between them - e.g. of histories of the Universe between the Big Bang and Big Crunch. Returning to the topic, LIGO measures lengths - which are T/CPT invariant, so in theory it should also see advanced waves ... if only there are such events, scientists should test instead of assuming, but the big question is what to search for e.g. in LIGO data? Reversed chirps are just a first guess ... In contrast, EM receivers are rather focused on absorption - of retarded waves. For advanced they would need to be sensitive to stimulated emission - requiring excitation of the sensor, which is rarely used now.
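
To illustrate what a "reversed chirp" would look like as a search template, here is a toy sketch. The waveform is a simple linear frequency sweep with growing amplitude, not a GR inspiral model, and the names `toy_chirp`, `retarded_like` and `advanced_like` are hypothetical labels used only for this example:

```python
import math


def toy_chirp(n_samples=4000, duration=1.0, f_start=20.0, f_end=200.0):
    """Toy chirp: frequency sweeps linearly upward and amplitude grows with time."""
    dt = duration / n_samples
    sweep_rate = (f_end - f_start) / duration
    samples = []
    for i in range(n_samples):
        t = i * dt
        phase = 2.0 * math.pi * (f_start * t + 0.5 * sweep_rate * t * t)
        amplitude = 0.2 + 0.8 * t / duration
        samples.append(amplitude * math.sin(phase))
    return samples


def zero_crossings(samples):
    """Count sign changes, a crude proxy for the average frequency."""
    return sum(1 for a, b in zip(samples, samples[1:]) if a * b < 0)


def half_crossings(samples):
    """Zero crossings in the first and second half of the record."""
    mid = len(samples) // 2
    return zero_crossings(samples[:mid]), zero_crossings(samples[mid:])


retarded_like = toy_chirp()          # frequency rises toward the end
advanced_like = retarded_like[::-1]  # time-reversed copy: frequency falls

print("retarded-like (first half, second half):", half_crossings(retarded_like))
print("advanced-like (first half, second half):", half_crossings(advanced_like))
```

The time-reversed copy starts loud and fast and ends quiet and slow, so a matched-filter search would need such reversed templates rather than the usual ones; whether any physical process produces them is exactly the open question here.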